Democrats on the House Intelligence Committee on Thursday released more than 3,000 Facebook ads that have been linked to the “Russian troll factory” known as the Internet Research Agency. The ads were provided to the committee by Facebook as part of a congressional investigation into Russian attempts to influence the 2016 election. But the main impression one gets from reading through some of the 3,519 files is just how innocuous most of them were. Could they have swayed people during the election? Perhaps. But it’s difficult to see how.

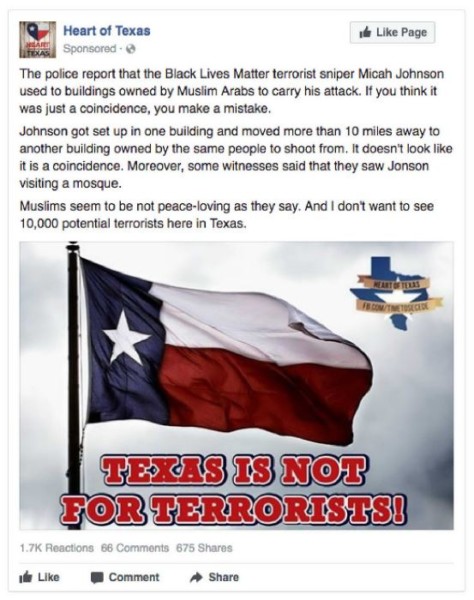

Rep. Adam Schiff, the ranking Democrat on the House committee, said in a prepared statement that he chose to release the ads because “the only way we can begin to inoculate ourselves against a future attack is to see first-hand the types of messages, themes and imagery the Russians used to divide us.” And it is certainly fascinating to see how the IRA ads played on divisive topics such as Black Lives Matter, images of American patriotism and pro-LGBTQ content. But determining what kind of actual impact they might have had is much more difficult.

A random walk through some of the 3,000-plus files provided by the committee shows that the vast majority got a tiny, tiny number of “impressions” — which simply means they appeared in someone’s news feed. And most got an equally minuscule number of clicks, and in some cases none at all. Did simply viewing these ads cause anyone to change their mind about Donald Trump or Hillary Clinton, or about issues like immigration or Black Lives Matter?

Experts in this type of disinformation and propaganda warfare, which has been going on since before the internet and social media were invented, say pushing people in a specific direction often isn’t the point. As Facebook noted in an internal security report released last year, much of the activity involving fake social-media accounts spreading misinformation didn’t seem to have any specific goal, but instead appeared to be designed simply to sow confusion.

“We identified malicious actors on Facebook who, via inauthentic accounts, actively engaged across the political spectrum, with the apparent intent of increasing tensions between supporters of these groups and fracturing their supportive base.”

To take one comical example that was highlighted during the congressional hearings into Russian influence on social-media platforms in November, a Facebook group run by the Internet Research Agency and pretending to be a US organization called Heart of Texas announced an anti-Muslim rally in Houston, and a separate Russian-linked group pretending to be a pro-Muslim organization announced its own rally on the same day, at the exact same location.

Did all of this theater actually persuade anyone of anything, or cause them to vote a specific way, or not to vote at all? That’s almost impossible to know. We know that Russian trolls spent a tiny sum of money, about $100,000, on their campaign. But we also know that ads are the tip of the iceberg, since any post on Facebook can function like an ad, provided it gets promoted by the News Feed algorithm. About 10 million people saw the ads, but according to Facebook, more than 150 million saw “organic” content posted by Russian-linked agents.

In a sense, critics who argue that this haphazard Russian misinformation campaign did sway the election are among Facebook’s biggest fans, because that argument assumes Facebook ads are hugely powerful and capable of affecting human behavior on a large scale. The reality is that we don’t know what real-world impact they had, and we may never know.

Mathew Ingram is CJR’s chief digital writer. Previously, he was a senior writer with Fortune magazine. He has written about the intersection between media and technology since the earliest days of the commercial internet. His writing has been published in the Washington Post and the Financial Times as well as by Reuters and Bloomberg.