New York’s Andre Tartar has an intriguing post on the biggest American corporations and how much their employment levels have changed over the last half-century. His conclusion:

It’s a stark illustration of a hard truth: Being a top American business no longer necessarily means employing lots of American workers.

The Atlantic’s Derek Thompson riffs on that:

Fifty years ago, the four most valuable U.S. companies employed an average of 430,000 people with an average market cap of $180 billion. This year, the four largest U.S. companies employ an average 120,000 people with an average market cap of $334 billion. The titans of 2011 have twice the value of their 1964 counterparts with a quarter of the employees.

This is clearly not a scientific study, but it’s an interesting snapshot, at least. I’d be interested in seeing the numbers for a broader swath of companies, like the S&P 500, where you could get statistically significant results.
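For what it’s worth, the arithmetic behind Thompson’s “twice the value with a quarter of the employees” is easy to check. Below is a rough sketch in Python that uses only the four averages he quotes. The variable and function names are mine, and it assumes the two market-cap figures are in comparable dollars, which the quote doesn’t spell out.

```python
# Averages for the four most valuable U.S. companies, as quoted by Thompson.
# Assumption: both market caps are expressed in comparable dollars.
FIGURES = {
    1964: {"employees": 430_000, "market_cap_usd": 180e9},
    2011: {"employees": 120_000, "market_cap_usd": 334e9},
}


def cap_per_employee(year):
    """Average market cap divided by average headcount for the given year."""
    row = FIGURES[year]
    return row["market_cap_usd"] / row["employees"]


# How much more valuable, and how much smaller in headcount, the 2011 group is.
value_ratio = FIGURES[2011]["market_cap_usd"] / FIGURES[1964]["market_cap_usd"]
headcount_ratio = FIGURES[2011]["employees"] / FIGURES[1964]["employees"]

# The two ratios combined: market value per employee, 2011 versus 1964.
per_employee_ratio = cap_per_employee(2011) / cap_per_employee(1964)

print(f"Value, 2011 vs. 1964: {value_ratio:.2f}x")
print(f"Headcount, 2011 vs. 1964: {headcount_ratio:.2f}x")
print(f"Market cap per employee, 1964: ${cap_per_employee(1964):,.0f}")
print(f"Market cap per employee, 2011: ${cap_per_employee(2011):,.0f}")
print(f"Per-employee ratio: {per_employee_ratio:.1f}x")
```

On those numbers, value is up a bit under twofold and headcount is down to a bit over a quarter, so market value per employee comes out roughly six and a half times higher in 2011 than in 1964. It’s still a comparison of two four-company samples, so the same caveats apply.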

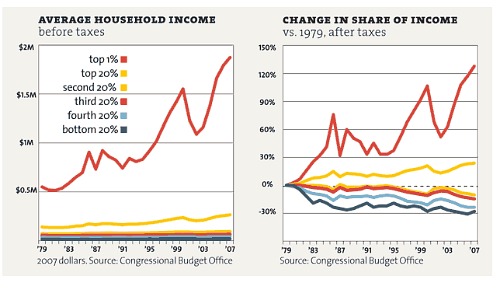

But it seems to show anecdotally how workers (and robots/computers) have gotten more productive and how corporations have gotten more efficient. You’d expect companies to get more production out of each worker over fifty years, and that should be a good thing if benefits are distributed fairly (which, of course, as you see in the chart below, they are not).

What’s going on here? One likely answer comes from David Runciman’s review of Jacob Hacker and Paul Pierson’s new book in the London Review of Books (emphasis mine):

The real beneficiaries of the explosion in income for top earners since the 1970s has been not the top 1 per cent but the top 0.1 per cent of the general population. Since 1974, the share of national income of the top 0.1 per cent of Americans has grown from 2.7 to 12.3 per cent of the total, a truly mind-boggling level of redistribution from the have-nots to the haves. Who are these people? As Hacker and Pierson note, they are ‘not, for the most part, superstars and celebrities in the arts, entertainment and sports. Nor are they rentiers, living off their accumulated wealth, as was true in the early part of the last century. A substantial majority are company executives and managers, and a growing share of these are financial company executives and managers.’

Clearly the productivity gains of the last thirty-plus years, at least, haven’t gone to workers. The bottom 80 percent has seen its share of after-tax income plunge since 1979 while the top 1 percent’s share has soared:

So where did all those workers go?

Have they gone into the service economy, with its mostly lower-paying jobs? It’s worth noting that Walmart, now in the top 10, has 2.1 million employees and is worth less per employee than Sears was in 1964, when it was a top 10 company.

I’d guess that one of the other major differences between these groups of companies is that companies now outsource more of their production to non-employees than they did in 1964. In other words, these companies probably produce more jobs than meets the eye.

But that’s offset at least somewhat by offshoring, as Tartar points out:

The respective figures for 2011 and 1964 are even more striking considering that today’s U.S. workforce is twice as large as 1964’s and a third of U.S.-based corporations’ employees are overseas.

So what’s behind the difference between the 1964 and 2011 numbers, and does it apply more broadly to big American companies? It’d be nice to see some reporting on that.

One of the big blind spots of financial journalism is historical perspective. Virtually none of us were alive in 1964, much less working then.

But I suspect it’s worse than that. Whatever institutional and historical knowledge the press once had has been gutted by buyouts and layoffs in the last several years.

Ryan Chittum is a former Wall Street Journal reporter, and deputy editor of The Audit, CJR’s business section. If you see notable business journalism, give him a heads-up at rc2538@columbia.edu. Follow him on Twitter at @ryanchittum.