In the past few months we have been deluged with headlines about new AI tools and how much they are going to change society.

Some reporters have done amazing work holding the companies developing AI accountable, but many struggle to report on this new technology in a fair and accurate way.

We—an investigative reporter, a data journalist, and a computer scientist—have firsthand experience investigating AI. We’ve seen the tremendous potential these tools can have—but also their tremendous risks.

As their adoption grows, we believe that, soon enough, many reporters will encounter AI tools on their beat, so we wanted to put together a short guide to what we have learned.

We’ll begin with a simple explanation of what these tools are.

In the past, computers were fundamentally rule-based systems: if a particular condition A is satisfied, then perform operation B. But machine learning (a subset of AI) is different. Instead of following a set of rules, we can use computers to recognize patterns in data.

For example, given enough labeled photographs (hundreds of thousands or even millions) of cats and dogs, we can teach certain computer systems to distinguish between images of the two species.

This process, known as supervised learning, can be performed in many ways. One of the most common techniques used recently is called neural networks. But while the details vary, supervised learning tools are essentially all just computers learning patterns from labeled data.
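To make the contrast concrete, here is a minimal Python sketch. Everything in it is an assumption made for illustration: the measurements are invented, the 8 kg cutoff is arbitrary, and the "learning" step is a deliberately simple nearest-average method rather than a neural network. The first function follows a rule a person wrote down; the second takes its behavior from labeled examples.

```python
from statistics import mean

# Hypothetical labeled data: (weight in kg, ear length in cm) -> species.
# These numbers are invented for illustration only.
labeled = [
    ((4.0, 6.5), "cat"),
    ((3.5, 6.0), "cat"),
    ((5.0, 7.0), "cat"),
    ((20.0, 11.0), "dog"),
    ((30.0, 12.5), "dog"),
    ((12.0, 10.0), "dog"),
]


def rule_based(features):
    """Rule-based system: a person picked the condition and the cutoff."""
    weight_kg, _ear_cm = features
    return "dog" if weight_kg > 8.0 else "cat"


def train(examples):
    """Supervised learning, in miniature: average the examples for each label."""
    by_label = {}
    for features, label in examples:
        by_label.setdefault(label, []).append(features)
    return {
        label: tuple(mean(column) for column in zip(*rows))
        for label, rows in by_label.items()
    }


def predict(averages, features):
    """Answer with the label whose average example is closest to the new one."""
    def squared_distance(a, b):
        return sum((x - y) ** 2 for x, y in zip(a, b))

    return min(averages, key=lambda label: squared_distance(averages[label], features))


averages = train(labeled)
new_animal = (6.0, 6.8)
print("rule says:", rule_based(new_animal))
print("learned model says:", predict(averages, new_animal))
```

Changing the labeled examples changes what the second function predicts without anyone editing its code, which is why the data deserves as much scrutiny as the software.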

Similarly, one of the techniques used to build recent models like ChatGPT is called self-supervised learning, where the labels are generated automatically.

Be skeptical of PR hype

People in the tech industry often claim they are the only people who can understand and explain AI models and their impact. But reporters should be skeptical of these claims, especially when coming from company officials or spokespeople.

“Reporters tend to just pick whatever the author or the model producer has said,” Abeba Birhane, an AI researcher and senior fellow at the Mozilla Foundation, said. “They just end up becoming a PR machine themselves for those tools.”

In our analysis of AI news, we found that this was a common issue. Birhane and Emily Bender, a computational linguist at the University of Washington, suggest that reporters talk to domain experts outside the tech industry and not just give a platform to AI vendors hyping their own technology. For instance, Bender recalled that she read a story quoting an AI vendor claiming their tool would revolutionize mental health care. “It’s obvious that the people who have the expertise about that are people who know something about how therapy works,” she said.

In the Dallas Morning News’s series of stories on Social Sentinel, the company repeatedly claimed its model could detect students at risk of harming themselves or others from their posts on popular social media platforms, and it made outlandish claims about the model’s performance. But when reporters talked to experts, they learned that reliably predicting suicidal ideation from a single post on social media is not feasible.

Many editors could also choose better images and headlines, said Margaret Mitchell, chief ethics scientist of the AI company Hugging Face. Inaccurate headlines about AI often influence lawmakers and regulation, which Mitchell and others then have to try to fix.

“If you just see headline after headline that are these overstated or even incorrect claims, then that’s your sense of what’s true,” Mitchell said. “You are creating the problem that your journalists are trying to report on.”

Question the training data

After the model is “trained” with the labeled data, it is evaluated on an unseen data set, called the test or validation set, and scored using some sort of metric.

The first step when evaluating an AI model is to see how much and what kind of data the model has been trained on. The model can only perform well in the real world if the training data represents the population it is being tested on. For example, if developers trained a model on ten thousand pictures of puppies and fried chicken, and then evaluated it using a photo of a salmon, it likely wouldn’t do well. Reporters should be wary when a model trained for one objective is used for a completely different objective.

In 2017, Amazon researchers scrapped a machine learning model used to filter through résumés, after they discovered it discriminated against women. The culprit? Their training data, which consisted of the résumés of the company’s past hires, who were predominantly men.

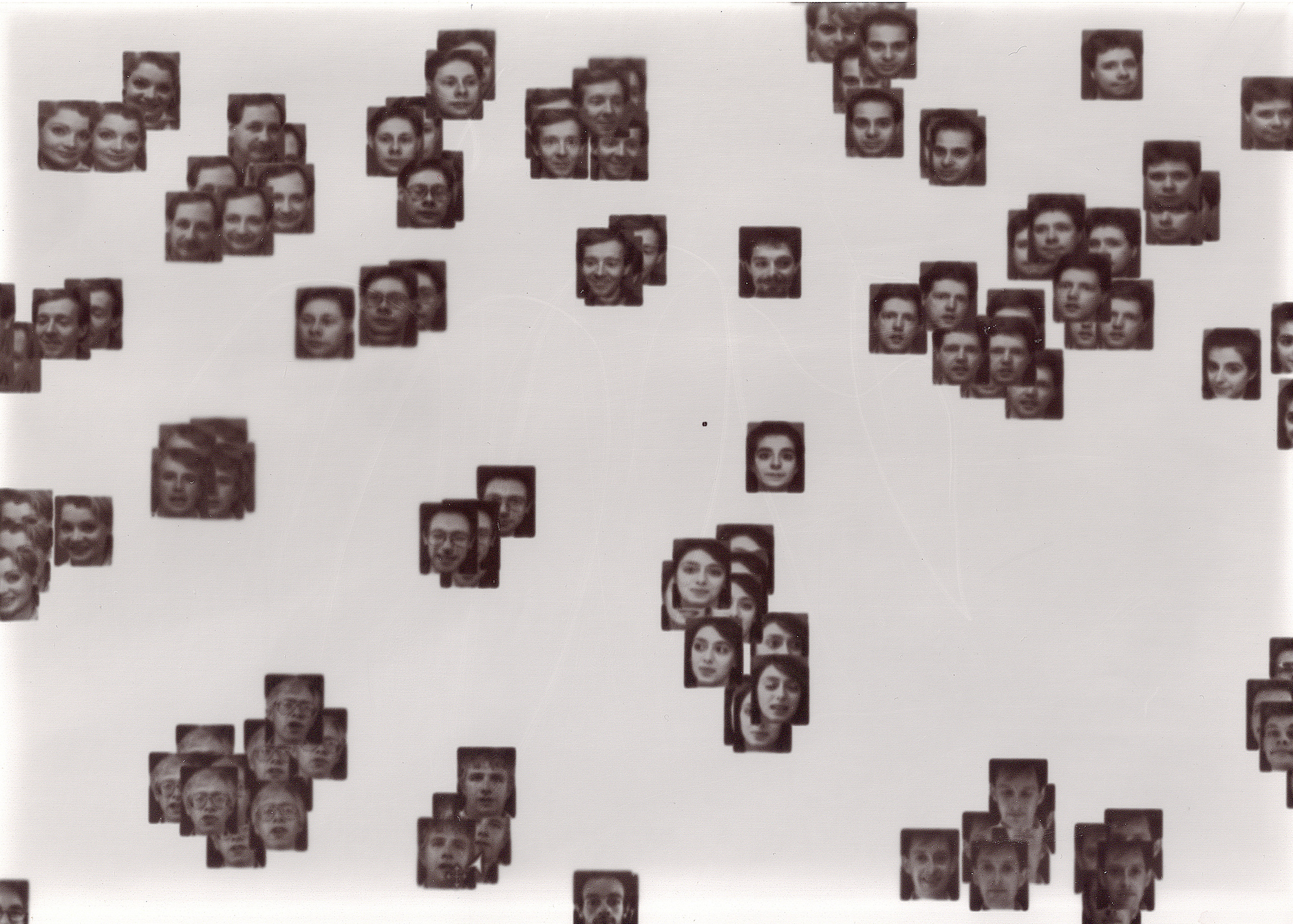

Data privacy is another concern. In 2019, IBM released a data set with the faces of a million people. The following year a group of plaintiffs sued the company for including their photographs without consent.

Nicholas Diakopoulos, a professor of communication studies and computer science at Northwestern, recommends that journalists ask AI companies about their data collection practices and whether subjects gave their consent.

Reporters should also consider the company’s labor practices. Earlier this year, Time magazine reported that OpenAI paid Kenyan workers $2 an hour for labeling offensive content used to train ChatGPT. Bender said these harms should not be ignored.

“There’s a tendency in all of this discourse to basically believe all of the potential of the upside and dismiss the actual documented downside,” she said.

Evaluate the model

The final step in the machine learning process is for the model to output a guess on the testing data and for that output to be scored. Typically, if the model achieves a good enough score, it is deployed.

Companies trying to promote their models frequently quote numbers like “95 percent accuracy.” Reporters should dig deeper here and ask whether the high score comes only from a holdout sample of the original data or whether the model was checked with realistic examples. These scores are only valid if the testing data matches the real world. Mitchell suggests that reporters ask specific questions like “How does this generalize in context?” and “Was the model tested ‘in the wild’ or outside of its domains?”

It’s also important for journalists to ask what metric the company is using to evaluate the model—and whether that is the right one to use. A useful question to consider is whether a false positive or false negative is worse. For example, in a cancer screening tool, a false positive may result in people getting an unnecessary test, while a false negative might result in missing a tumor in its early stage when it is treatable.
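The arithmetic behind that question can be sketched in a few lines of Python. The screening population below is hypothetical (1,000 people, 50 of whom have a tumor), and both "tools" are invented to make the point. One tool never flags anyone; the other flags more people and is wrong more often overall.

```python
def summarize(actual, predicted):
    """Count each kind of outcome, then derive accuracy and tumors caught."""
    pairs = list(zip(actual, predicted))
    true_pos = sum(a == 1 and p == 1 for a, p in pairs)
    false_pos = sum(a == 0 and p == 1 for a, p in pairs)
    true_neg = sum(a == 0 and p == 0 for a, p in pairs)
    false_neg = sum(a == 1 and p == 0 for a, p in pairs)
    return {
        "accuracy": (true_pos + true_neg) / len(pairs),
        "false_positives": false_pos,   # healthy people sent for another test
        "false_negatives": false_neg,   # tumors the tool missed
        "share_of_tumors_caught": true_pos / (true_pos + false_neg),
    }


# Hypothetical population: 1 = has a tumor, 0 = does not.
actual = [1] * 50 + [0] * 950

# Invented tool A: tells everyone they are healthy.
always_negative = [0] * 1000

# Invented tool B: catches 45 of the 50 tumors but also flags 95 healthy people.
cautious = [1] * 45 + [0] * 5 + [1] * 95 + [0] * 855

print("tool A:", summarize(actual, always_negative))
print("tool B:", summarize(actual, cautious))
```

Tool A scores 95 percent accuracy while missing every tumor. Tool B scores 90 percent and catches nine in ten. Which is better depends on which error costs more, and accuracy alone cannot answer that.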

The choice of metric can be crucial in determining questions of fairness in the model. In May 2016, ProPublica published an investigation into an algorithm called COMPAS, which aimed to predict a criminal defendant’s risk of committing a crime within two years. The reporters found that, despite having similar accuracy between Black and white defendants, the algorithm had twice as many false positives for Black defendants as for white defendants.

The article ignited a fierce debate in the academic community over competing definitions of fairness. Journalists should specify which version of fairness is used to evaluate a model.
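How two groups can share the same accuracy and still be treated differently is easier to see with numbers. The Python sketch below uses invented counts for two hypothetical groups of 100 people each. These are not the COMPAS figures, and the group names and class are ours.

```python
from dataclasses import dataclass


@dataclass
class Outcomes:
    """Counts of each prediction outcome for one group (hypothetical numbers)."""
    true_pos: int    # flagged high risk, did reoffend
    false_pos: int   # flagged high risk, did not reoffend
    true_neg: int    # flagged low risk, did not reoffend
    false_neg: int   # flagged low risk, did reoffend

    def accuracy(self):
        total = self.true_pos + self.false_pos + self.true_neg + self.false_neg
        return (self.true_pos + self.true_neg) / total

    def false_positive_rate(self):
        """Among people who did not reoffend, the share wrongly flagged."""
        return self.false_pos / (self.false_pos + self.true_neg)

    def false_negative_rate(self):
        """Among people who did reoffend, the share wrongly cleared."""
        return self.false_neg / (self.false_neg + self.true_pos)


# Invented counts, chosen so that overall accuracy is identical.
group_a = Outcomes(true_pos=30, false_pos=20, true_neg=40, false_neg=10)
group_b = Outcomes(true_pos=20, false_pos=10, true_neg=50, false_neg=20)

for name, group in [("group A", group_a), ("group B", group_b)]:
    print(
        name,
        "accuracy:", round(group.accuracy(), 2),
        "false positive rate:", round(group.false_positive_rate(), 2),
        "false negative rate:", round(group.false_negative_rate(), 2),
    )
```

Both groups come out at 70 percent accuracy, yet people in group A who did not reoffend are wrongly flagged at twice the rate of those in group B, while group B sees more people wrongly cleared. A model can look fair under one definition and unfair under another, which is why the definition in use should be named.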

Recently, AI developers have claimed their models perform well not only on a single task but in a variety of situations. “One of the things that’s going on with AI right now is that the companies producing it are claiming that these are basically everything machines,” Bender said. “You can’t test that claim.”

In the absence of any real-world validation, journalists should not believe the company’s claims.

Consider downstream harms

As important as it is to know how these tools work, the most important thing for journalists to consider is what impact the technology is having on people today. Companies like to boast about the positive effects of their tools, so journalists should remember to probe the real-world harms the tool could enable.

AI models not working as advertised is a common problem, and has led to several tools being abandoned in the past. But by that time, the damage is often done. Epic, one of the largest healthcare technology companies in the US, released an AI tool to predict sepsis in 2016. The tool was used across hundreds of US hospitals—without any independent external validation. Finally, in 2021, researchers at the University of Michigan tested the tool and found that it worked much more poorly than advertised. After a series of follow-up investigations by Stat News, a year later, Epic stopped selling its one-size-fits-all tool.

Ethical issues arise even if a tool works well. Face recognition can be used to unlock our phones, but it has already been used by companies and governments to surveil people at scale. It has been used to bar people from entering concert venues, to identify ethnic minorities, and to monitor workers and people living in public housing, often without their knowledge.

In March reporters at Lighthouse Reports and Wired published an investigation into a welfare fraud detection model utilized by authorities in Rotterdam. The investigation found that the tool frequently discriminated against women and non–Dutch speakers, sometimes leading to highly intrusive raids of innocent people’s homes by fraud controllers. Upon examination of the model and the training data, the reporters also found that the model performed little better than random guessing.

“It is more work to go find workers who were exploited or artists whose data has been stolen or scholars like me who are skeptical,” Bender said.

Jonathan Stray, a senior scientist at the Berkeley Center for Human-Compatible AI and former AP editor, said that talking to the humans who are using or are affected by the tools is almost always worth it.

“Find the people who are actually using it or trying to use it to do their work and cover that story, because there are real people trying to get real things done,” he said.

“That’s where you’re going to find out what the reality is.”

Sayash Kapoor, Hilke Schellmann and Ari Sen are, respectively, a Princeton University computer science Ph.D. candidate, a journalism professor at New York University, and a computational journalist at the Dallas Morning News. Hilke and Ari are AI accountability fellows at the Pulitzer Center.