To download or read a PDF of this report, visit the Tow Center’s Gitbook page.

Executive Summary

With the rise of nonprofit, foundation-funded newsrooms, the field of Monitoring and Evaluation (M&E), which emerged in the international development community, has taken a strong foothold in journalism. As nonprofit newsrooms apply for grants and appeal to donors for funding, they often need to explain in formal reports “how well” their stories performed. That means not just impressive traffic but qualitative evaluations of the impact their reporting had on the world: Did it change a law? Did it move the needle in the conversation? Did it meet the expectations, however defined, that the organization had for it? Based on survey research and interviews with newsrooms regarding current impact measurement practices, the researchers designed and built a new analytics platform called NewsLynx. It improves on existing methods of displaying quantitative metrics and adds qualitative information that such tools previously lacked. Many newsrooms found current analytics tools insufficient for fully capturing their output’s performance. They had trouble comparing audience reactions to different stories or seeing the effects of their social media and promotional efforts. While they often had multiple data sources (Google Analytics, Omniture, etc.), putting these numbers into context was still difficult.

The NewsLynx Project Implements Three Key Ideas

- NewsLynx seeks to augment metrics with context. It shows how an article performs in comparison to the average of all a publication’s articles and allows comparisons within subsets—all immigration articles, for example, or within any user-defined category.

- NewsLynx also provides efficient tools for tracking, categorizing, and assessing indicators of impact aside from audience reach. Such impact indicators might be legislative reform or community action. This has previously proved extremely difficult and time-consuming. NewsLynx’s “Approval River” functionality aims to reduce the effort associated with managing traditional clip searches and social media searches, which newsrooms use to monitor impact. Crucially, it allows users to apply consistent (and therefore comparable) metadata to impact indicators.

- The NewsLynx developers propose an impact framework that allows for the fact that real-world impact measures are often messy and hard to categorize. NewsLynx implements a framework that offers newsrooms enough structure to categorize “impactful events” across similar boundaries, while also providing enough freedom for them to create their own impact definitions to match particular goals. Importantly, the researchers believe that successful, long-term impact measurement can only result from identifying such organizational goals.
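The first idea above, showing a metric against the average of all of a publication’s articles and against tag-defined subsets, can be illustrated with a short sketch. It is not NewsLynx’s actual code. The `Article` record, its field names, and the choice of page views as the metric are all hypothetical. The sketch assumes the article being scored is itself part of the corpus, so every tag subset it belongs to is non-empty.

```python
from dataclasses import dataclass, field
from statistics import mean


@dataclass
class Article:
    # Hypothetical article record; field names are illustrative only.
    slug: str
    pageviews: int
    tags: set[str] = field(default_factory=set)


def compare_to_baselines(article: Article, corpus: list[Article]) -> dict[str, float]:
    """Return the article's page views as a ratio of each baseline's mean.

    The "all" baseline averages every article in the corpus. Each tag the
    article carries adds one baseline restricted to articles with that tag.
    A ratio of 1.0 means exactly average; 2.0 means twice the average.
    """
    baselines = {"all": mean(a.pageviews for a in corpus)}
    for tag in sorted(article.tags):
        subset = [a.pageviews for a in corpus if tag in a.tags]
        baselines[f"tag:{tag}"] = mean(subset)
    # Skip baselines with a zero mean to avoid dividing by zero.
    return {name: article.pageviews / avg for name, avg in baselines.items() if avg > 0}
```

In a corpus of two immigration stories (1,000 and 3,000 views) and two court stories (500 each), the 3,000-view story scores 2.4 against the whole site but only 1.5 against other immigration stories. That gap is the kind of context the comparison is meant to surface, and it is available only if articles are consistently tagged.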

Key Observations and Recommendations

- Effective impact measurement must be tied to an organization’s goals. No amount of technology can help an organization measure what it hasn’t defined as important. Should a newsroom’s reporting seek to change the narrative around an issue? Does it want to reach certain stakeholders or affect lasting reform? Only after an organization has understood what it wants to achieve can quantitative and qualitative tools assess how close the organization is to that goal.

- Both quantitative and qualitative metrics have a place in impact measurement. While quantitative metrics are often vilified as leading journalism astray from its true purpose, the researchers found they do help tell the story of a newsroom’s performance. Although this project began with an interest in giving more visibility to qualitative measurements, its founders repeatedly heard reports from newsrooms that quantitative measurements play an important role for organizations wanting to tell a long-term story of audience growth.

- Newsrooms should better tag their articles. Newsrooms that want to properly understand their own performance over time should put more care into tagging and cataloging their stories. These practices can give an organization a better understanding of its own operations and how much space it devotes to each subject. Tags also offer staff the ability to perform myriad analyses comparing stories and packages. Without differentiating and labeling content, it is difficult to understand patterns in traffic or impact.

- Newsrooms have metrics, but they also still have many questions, particularly about audience. As one newsroom put it, “Google Analytics feels both too complicated and not powerful enough for the questions we want to answer about readers.” Many existing tools aren’t designed to analyze performance from readers’ perspectives. What did readers think about the story? Did they leave after the fifth graf because they had grasped the newsy part and didn’t need any more, or was the site design wrong or the prose too dense? Nor do common tools provide enough insight into the relationship between the news organization and its audience.

- Custom analytics solutions have recently become more feasible. With the continuing maturation of open source analytics pipelines, it is now possible for news organizations to own their entire analytics stack and not rely on third-party vendors for the data-collection portion of their metrics. In other words, the next few years could see newsrooms access much more diverse offerings, providing faster analysis and greater detail more relevant to journalism. That being said, these pipelines are largely for data collection, so most newsrooms will need to design and implement their own custom interfaces to interpret this data for the average reporter and editor.

Introduction

The idea for this project began on a blustery spring day on a visit to an office inside the recesses of The New York Times. The day’s agenda was to better understand the process whereby Glenn Kramon, then the assistant managing editor for enterprise, helped decide which of the Times’s many stories from the previous year should be nominated for the Pulitzer Prizes. His desk was littered with books, printouts, envelopes, and handwritten notes. On the wall hung a proudly framed full-page ad that the Ford Motor Company had taken out, promising improvements in response to the paper’s investigation into SUV rollover deaths in the late 1990s.1 Sights like this weren’t entirely unusual in a building where you can easily find yourself in a hallway covered with portraits of award-winning teams and their front pages. But the scene was striking for the simple fact that here, for lack of a better description, unfolded a crucial step in how the impact sausage was made.

The process was this: Whenever a Times investigation was mentioned in a meaningful way, whether in a citation by a competing publication, a complimentary letter from a senator, or an official response by a corporation or government, a slip of paper would make its way from Kramon’s desk into one of dozens of large manila envelopes. These were filled, hand-labeled, and filed in boxes under his desk. Kramon pulled a seemingly random scrap from one of the envelopes on the iEconomy series (an explanatory series that would later win that category’s 2013 Pulitzer Prize2), and his eyes lit up. It was a note he had written describing pickup from an unusual source. “I knew ‘iEconomy’ was big when Saturday Night Live spoofed it,”3 he said.

As the conversation progressed to the question of how one might actually measure impact, Kramon reached under his desk again, pulled out an overloaded envelope, and squeezed it to demonstrate its thickness. “Do you want to know what impact is? It’s this right here.” That was to say: at the end of the year the stories with the thickest envelopes—the ones that resonated the most with the outside world—were the likeliest candidates for submission.

How newsrooms conceive of and measure the impact of their work is a messy, idiosyncratic, and often-rigorous process—based at times on what simply seems worth remembering during the life of a story; at others, on strict guidelines of what passes the “impact bar.”

As a result, we conceived of our related research project in two parts: first as an attempt to understand how and through which processes news organizations both large and small currently approach the “impact problem” and second, to see if we could develop a better way—through building a technology platform called NewsLynx—to help those newsrooms fill the proverbial envelope.

Definitions

Before going any further, we should clarify what we mean by impact. To start, our base assumption is that journalism can and does have an effect on the world, and it does so without necessarily becoming advocacy.i Unlike other work on this topic, we don’t offer a strict definition of impact. Based on our research, successful impact measurement can only happen if an organization has identified its institutional goals. From there, it can begin to measure elements that bring it closer to those goals (e.g., encouraging subject-matter influencers to discuss one’s work if the goal is to improve the credibility of one’s reporting). As a result, we created a loose impact framework as opposed to a strict definition.

This approach offered us two advantages. The first is that it exposed newsrooms’ current thinking about what they consider to be important events, allowing us to see the existing differences across organizations. The second is that it served as scaffolding for newsrooms that do not yet have an articulated understanding of their own goals. We found this was the case most often with national and international publications and less so with local, regional, or topic-based ones.

Maintaining standard terminology and vocabulary is a worthwhile goal, however. It goes without saying that the more newsrooms use common terms and tools, the more opportunity exists for contextualizing and understanding a project’s success. In Chapter 5 of this report, Recommendations and Open Questions, we discuss those factors that could make standardization possible in the future.

Our view of impact necessarily incorporates both qualitative and quantitative information. Many newsrooms interested in measuring qualitative events expressed their frustration at the limits of page-view-driven decisions. They worried that only high-traffic stories get lauded and, consequently, shape the editorial agenda. In fact, when we started this project, one of our main goals was to build a tool to better highlight qualitative events. If traffic is the only measure of success, then how can you show the value of the niche story on an important topic?

Through the course of our research, we also heard examples emphasizing the value of tracking existing and new quantitative measurements. One such story that stuck with us came from a mid-size, nonprofit newsroom producing a mix of investigative, political, and culture reporting. It explained that routine traffic to its stories is orders of magnitude higher today than it was two years ago. The newsroom uses this information to show readers and funders that the organization is trending upward. By taking this data in the aggregate, and not letting any one number guide strategy, the newsroom can construct a narrative about its editorial reach that is backed by numbers rather than by discrete and varying qualitative events.

Jonathan Stray wrote about the difficulty of qualitative measurement: “Some events are just too rare to provide reliable comparisons—how many times last month did your newsroom get a corrupt official fired?”5 Numbers do fill a valuable role in understanding organizational health, and we think removing them from the equation eliminates a potentially valuable lens through which to gauge success.

When we say “the impact of journalism” or “the impact of a newsroom’s work” we should also clarify the limits to what we can reliably study—that is to say, where we chose to start research for this project. From cultural commentary to the court reporter on a beat, journalism exists in so many varieties that it can make the question of journalism’s impact seem too large to tackle.

To narrow the scope of our research, our initial target newsroom was the small, nonprofit investigative organization.

Two reasons informed this choice. First, we were most interested in journalism that seeks to address something about the world (this is often investigative work) and therefore allows newsrooms to more easily state tangible goals for their projects. For instance, did this illegal practice end? Did the government increase oversight? Are companies now following the law?

The second reason was organizational. Such newsrooms often look to grants or benefactors for funding; and these outside groups often require reports outlining how the organizations have used their money—hopefully guaranteeing that it was well spent. As a result, impact measurement is not a foreign concept to these benefactors, albeit still not an easy one.

We want to stress that these are neither the only nor necessarily the “best” examples of journalism’s impact. For example, looking at how media coverage can shape discourse is another fascinating and worthwhile area of study. We do, of course, remain cognizant of other forms of analysis, such as pre- and post-intervention surveys, which might fold into the NewsLynx platform in the future as more newsrooms adopt increasingly sophisticated techniques of impact measurement. Our focus here, however, is on the current needs and practices of investigative newsrooms.

Previous Research

Although our small, nonprofit variety of investigative newsrooms is on the forefront of the journalism community in thinking about impact, the concept of impact assessment is by no means new. The international aid and development communities have been heavily involved in this kind of thinking for years under the name “Monitoring and Evaluation” (M&E).

At the core of this movement is a simple question: How can we know if our work is having an effect in the world if we can’t measure it? This sentiment is perhaps best embodied in the Bill & Melinda Gates Foundation’s annual letter from 2013, in which Bill Gates, summarizing a passage from William Rosen’s book The Most Powerful Idea in the World, wrote, “without feedback from precise measurement …invention is ‘doomed to be rare and erratic.’ With it, invention becomes ‘commonplace.’ ”6

While we found no definitive history on the rise of M&E within international and non-governmental organizations, as early as 1999 the United Nations Development Programme (UNDP) began a major overhaul of how it conducted aid and development interventions, shifting toward an organization-wide emphasis on a “culture of performance.”7 With this shift, UNDP began mandating that all operations adopt the methodology of “results-based management” in which the effectiveness of programs would be assessed by establishing baselines before an intervention and then periodically collecting data to assess whether the program was working.

In 2000, with the unanimous adoption of the United Nations Millennium Declaration, a major governing document, and the corresponding Millennium Development Goals (MDGs), M&E moved into the mainstream. At the heart of the MDGs were eight objectives to address the world’s most intractable problems, including poverty, access to education, gender inequality, disease, and environmental degradation. Each of these eight objectives was associated with clear, measurable outcomes. For instance, in pursuit of the goal of eradicating extreme poverty, the MDGs pledged to “halve, between 1990 and 2015, the proportion of people living on less than $1.25 a day.”8 While the design of the MDGs came under harsh criticism for (among other reasons) its inability to capture relative versus absolute progress within aid circles,9 the underlying framework of explicitly stating goals and preselecting indicators to judge movement toward those goals soon became the norm.

It was not long until the world of philanthropy followed suit. Over the course of the following decade, organizations of grantmakers focused on realms as diverse as African aid,10 human rights,11 and the environment12 began discussing methods for monitoring and communicating the impact of their work. Reams of toolkits, best practices, and case studies were published on the issue.13

From Monitoring and Evaluation to Media Impact

While philanthropists began adopting the mantle of measurement, they also became increasingly interested in the importance of media for communicating and amplifying the message of their missions. Here we begin to see how the M&E framework that aid and development communities established connects directly with the present topic of measuring media impact.

With the release of high-profile, social-issue documentaries such as Bowling for Columbine (2002) and An Inconvenient Truth (2006), the power of mass media to steer public debate around a topic became readily apparent. In the years following, prominent funders like the Bill & Melinda Gates Foundation, the Ford Foundation, and Open Society Foundations latched onto documentary films as potential means of raising awareness and prompting action on widespread societal problems. From educational reform (Waiting For Superman) to schoolyard bullying (Bully) to fracking (Gasland), many of the most resonant documentary films of the past decade have received foundation support for their production, distribution, and/or associated outreach programs. Whether for purposes altruistic, financial, or both, it is now standard practice for documentary filmmakers to attach social-issue campaigns to their creative works.

In turn, the foundations that supported these films—influenced by their concurrent involvement in aid and development interventions—began requiring filmmakers to provide detailed reporting on the impact of their work. In practice, these reports initially relayed traditional metrics like viewership, ratings, and box-office returns. Yet, over time, they increasingly adopted more sophisticated social science methodologies, employing pre- and post-surveys, frame analysis, and monitoring of mass and social media mentions. BritDoc,14 a foundation established in 2005 to exclusively support social-issue documentaries, lists over 30 of these impact reports published since 2008 in its Impact Field Guide & Toolkit.15

Yet a fundamental difference exists between assessing the impact of an aid intervention and assessing that of a documentary film. If your goal is to eradicate polio, as is one mission of the Gates Foundation, it is (relatively) easy to measure the effectiveness of your intervention: simply counting the number of polio cases over time provides a reliable metric of success. If you’re concerned about the influence of confounding factors, like simultaneous development initiatives in the same region, you might design a randomized controlled trial to test the varying effectiveness of different vaccines, treatments, or educational campaigns. Documentary filmmakers, however, seek more abstract goals like raising awareness, shifting societal norms, or advancing the art form. While academics have attempted to design randomized studies to isolate the effect the mass media has in driving such outcomes, these approaches are limited to highly specific interventions and do not address the need for making comparisons across a variety of contexts.16 Journalism faces many of these same challenges as it increasingly moves toward business models driven by institutional and philanthropic support.

The Rise of Nonprofit Journalism

The last ten years have seen rapid growth in journalistic organizations built on these support sources. A 2013 study by the Pew Research Center identified 172 such nonprofit outlets in the United States.17 Of these, over 70 percent were founded after 2008. While mostly nascent, nonprofit news organizations have achieved considerable impact in this short time. In 2010, ProPublica, founded only three years prior, became both the first nonprofit and the first exclusively digital news organization to win a Pulitzer Prize for investigative reporting. Since then, the Center for Public Integrity and InsideClimate News have also received the prestigious honor. The Philip Meyer Award, an annual prize for computer-assisted reporting, has awarded its last three top prizes to nonprofit outlets.

Whether because they are unbound from bureaucratic legacies or banner ads, nonprofit news organizations have become beacons of innovation in the industry, regularly exploring and experimenting with new revenue models, distribution channels, mediums, and methods of reporting. This innovative spirit has captured the attention of serious funders such as the Knight Foundation, which in 2013 awarded at least 20 grants to 13 such institutions totaling nearly four million dollars (authors’ calculations from Knight’s 990s),18 not to mention numerous contributions to individuals and organizations to support the broader journalistic community (one of which has been the Tow Center itself). Some for-profit media outlets are also experimenting with foundation support. Since establishing its Strategic Media Partnerships program in 2011, the Gates Foundation has supported initiatives at The Seattle Times,19 The Guardian,72 and Univision.20

As foundations have entered the fray of journalism, they have brought with them the M&E philosophy inherited from their work with NGOs and the international development field. In turn, the livelihoods of nonprofit newsrooms have become increasingly linked to their ability to collect and report meaningful metrics of impact. Unsurprisingly, the Gates and Knight Foundations remain at the forefront of this movement. In 2011, Dan Green, the head of Gates’s aforementioned Strategic Media Partnerships program, convened journalists, editors, social scientists, and media grantees to share and strategize tools and methodologies for measuring impact. These sessions resulted in the publication of “Deepening Engagement for Lasting Impact: A Framework for Measuring Media Performance & Results.”21 The report offers a comprehensive guide for media makers facing the onus of impact, breaking the process of assessing it into four parts.

Closely following the framework of results-based management, the report instructs media grantees to set goals, define a target community, measure engagement, and ultimately, demonstrate impact. And yet, while this four-step process for measuring impact may appear simple enough, difficulty arises in its implementation. The report suggests the use of custom surveys, interviews with stakeholders, and analysis of data from disparate sources. These are tools that even the largest media organizations struggle to use correctly, let alone small nonprofit newsrooms. Many of the report’s proposed methodologies, like using Klout to identify influential audience members, already appear outdated only three years after publication. In sum, while comprehensive, the report ultimately did more to confuse and overwhelm its audience than it did to crystallize a direction forward.

Beyond these issues, the “Deepening Engagement for Lasting Impact” report had no response to the problem of scale. By this, we mean the challenge of creating tools and methodologies for measuring impact that can be applied to more than a single project. Many of the organizations we interviewed for this study struggled with the time and energy required to properly measure their impact. This effort was made all the more frustrating when different foundations asked for different metrics or asked for them to be reported in different formats. To address this issue, the Gates and Knight Foundations made a 3.25-million-dollar grant in 201322 to the USC Annenberg School of Communication to found the Media Impact Project (MIP).23 At the core of its mission is the promise of “developing processes and tools needed to implement media impact measurement frameworks.” This promise is manifested primarily in the Media Impact Project Measurement System, which has similar goals to NewsLynx.24 It seeks to weave together content, web and social media analytics, and qualitative data into a unified framework for application in a multitude of contexts (the authors of this study have consulted with MIP on its work in this domain). While the system has yet to be released, if successful it could be a significant step forward in scaling media impact measurement. The Media Impact Project differs from NewsLynx in that it anticipates outsiders devoting resources to studying a news organization’s operations.

The Quantification of Content

While foundations have played a large role in driving the movement toward measuring media impact, it would be inaccurate to describe this movement as strictly top-down. Journalists and editors themselves are skeptical of seeing the practice of journalism through an increasingly quantitative lens, the page view being the most prominent example (the metric simply counts the number of times an article has been opened). Page views have risen to prominence because they are relatively easy to capture and compare across contexts: a news organization can quickly ascertain which stories are driving the most traffic by comparing their number of page views.

As with any metric, once success is measured in its terms, sites optimize for it. Slide shows, which are designed to generate a page view for each image, are one outgrowth of metrics dictating content and user experience. Some media outlets, such as Gawker, even incentivized their writers by paying them based on the number of page views or monthly unique visitors their articles generated25 (monthly unique visitors is a derivation of a page view that accounts for multiple visits by the same readers). Others saw this shift toward metric-driven decision-making as at odds with quality journalism and summarized it as “clickbait.”
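The distinction between page views and unique visitors is easy to blur, so here is a minimal sketch of how both could be counted from the same raw log. The log format, one `(visitor_id, article_slug)` pair per page load, is an assumption for illustration. Restricting the log to a single month beforehand would turn the unique count into monthly unique visitors.

```python
from collections import defaultdict


def pageviews_and_uniques(hits):
    """Count page views and unique visitors per article.

    `hits` is an iterable of (visitor_id, article_slug) pairs, one per page
    load (a hypothetical log format). Every load counts as a page view, but
    a visitor who opens the same article several times counts only once as
    a unique visitor.
    """
    views = defaultdict(int)
    visitors = defaultdict(set)
    for visitor_id, slug in hits:
        views[slug] += 1
        visitors[slug].add(visitor_id)
    return {slug: (views[slug], len(visitors[slug])) for slug in views}
```

In this sketch, one reader clicking through a three-image slide show and a second reader viewing a single image would add four page views but only two unique visitors. This is why slide shows inflate the first number much more than the second.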

The pendulum swing in the other direction started around 2012 when newsroom figures like Greg Linch, an editor at The Washington Post; Aron Pilhofer, then at The New York Times; and Jonathan Stray, formerly head of Interactive News at the Associated Press, began writing about26 and further discussing27 alternative metrics for the newsroom.

That year, Pilhofer arranged for a Knight-Mozilla OpenNews fellow to spend a year working on this question,28 during which a co-author of this report, Brian Abelson, looked at ways to tackle alternatives.29

Many analytics companies have also joined this conversation, oftentimes declaring the “death of the page view” in so doing.30 Responding to skepticism and disdain for the click-driven web, companies like Chartbeat have begun developing metrics based on the time readers spend with an article, rather than the number of instances that article was viewed.31 While “time on page” has long existed within most analytics platforms, its interpretation is difficult since it can be affected by a reader leaving the page open in another tab. “Attention minutes” seek to address these problems by using more sophisticated methodologies to track when a reader is actually engaging with content.32 Many large media organizations like ESPN, Upworthy, and Medium have openly stated that they now prefer attention minutes over page views when it comes to measuring and reporting the success of their content.
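To show why “attention minutes” behave differently from raw time on page, here is a simplified sketch of one common idea: credit time only while the reader is active. It is not Chartbeat’s methodology, which is not described here. The five-second idle timeout and the notion of an “activity event” (a scroll, click, or keypress while the tab is visible) are assumptions chosen for illustration.

```python
def engaged_seconds(activity_times: list[float], idle_timeout: float = 5.0) -> float:
    """Estimate engaged time from timestamps (in seconds) of reader activity.

    Each activity event is assumed to signal attention for up to
    `idle_timeout` seconds (a hypothetical parameter). If the next event
    arrives sooner, only the gap is credited, so overlapping windows are
    never double-counted.
    """
    times = sorted(activity_times)
    total = 0.0
    for current, following in zip(times, times[1:]):
        total += min(following - current, idle_timeout)
    if times:
        total += idle_timeout  # the final event's window runs its full length
    return total
```

Consider a reader who scrolls at 0, 2, and 4 seconds, then leaves the article in a background tab and closes it ten minutes later. Naive time on page would report 600 seconds. The sketch credits 9 seconds: two 2-second gaps plus one final 5-second window.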

However, despite the promise of attention minutes in better aligning the interests of publishers and advertisers, the metric offers little help for truly measuring impact. In an online forum MIP hosted to discuss the relative merits of the metric, Jonathan Stray pointedly asked, “journalism is very much a multi-stakeholder endeavor, so why should we imagine that a single number can capture all aspects of the activity?” In other words, no single metric will properly address the challenge of measuring impact. We might even argue that the negative externalities generated by page views will simply take on new forms in a media landscape dominated by attention minutes. Ultimately, the problem is not the shortcomings of particular metrics; in many ways, metrics have greatly improved in recent years. The problem lies in optimizing newsrooms’ activities around a single figure above all others. Any metric given absolute primacy has the power to overemphasize certain areas and deemphasize others. One of the goals of this research is to add comparison points and context wherever possible to give the most holistic view of the metrics currently monitored, whether they be quantitative or qualitative indicators.

Current Efforts

Our project is certainly not the first or only effort trying to understand impact. Interesting initiatives are taking place on both the qualitative and quantitative side of the equation. Because a comprehensive review is outside the scope of this paper, we’ve chosen to discuss only a selection of projects—with an eye toward ones that have the most similarity to NewsLynx. For a more comprehensive list, see the “Impact Reading List” in Appendix B.

Qualitative Projects

Two projects aimed at the qualitative aspects of impact are the Center for Investigative Reporting’s (CIR) impact tracker and Chalkbeat’s tool called MORI (Measures of Our Reporting’s Influence).34

Center for Investigative Reporting

Lindsay Green-Barber, a post-doctoral ACLS Public Fellow brought on to serve as the organization’s first media impact analyst, designed CIR’s Impact Tracker as a simple online form that journalists and editors can fill out when they believe an investigation has led to a real-world impact. The form prompts users to describe what happened and when, to attach any associated links or documents, and to identify the CIR story to which the event relates. Users then assign the event to one of 17 carefully curated categories, which represent, in Green-Barber’s experience, the full range of potential outcomes from CIR’s work:

- Law change

- Government investigation

- Reader/viewer/listener contact

- Award

- Advocacy organization uses report

- Screenshot of CIR story in media outlet

- Public official refers to report

- Institutional action (firing, reorganization, etc.)

- Change of policy or regulation

- CIR staffer does a public appearance or interview

- Localization of story using CIR data

- Lawsuit filed

- Editorial

- Screening

- Professional organization cites reporting

- Social network share

- Other

The process also classifies impact across three levels of the event’s effect:

- Macro: Stories that have a concrete effect on things like legislation, changes in staffing involving those in power, or allocation of resources to a subject. Examples might include the prototypical impact event: the passage of a new law addressing an investigation’s findings.

- Meso: Stories that influence the general discourse and awareness around a subject. Examples of this could mean increased coverage on a topic at other media outlets or the public organization of a protest.

- Micro: Stories that lead to changes in individuals’ behavior or actions. Examples of this include an individual who writes a letter to his or her congressman or stops buying products revealed to be harmful.
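Fixed vocabularies like these are what make impact events comparable across stories. The sketch below shows one way a tracker could enforce them. The record layout, the field names, and the `tally` helper are hypothetical and do not describe CIR’s actual implementation. The category strings are short labels taken from the list above.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import date
from enum import Enum


class Level(Enum):
    MACRO = "macro"  # concrete effects: laws, staffing changes, resource allocation
    MESO = "meso"    # effects on public discourse and awareness
    MICRO = "micro"  # changes in individual behavior


# The 17 categories from the list above, as short labels.
CATEGORIES = frozenset({
    "Law change", "Government investigation", "Reader/viewer/listener contact",
    "Award", "Advocacy organization uses report",
    "Screenshot of CIR story in media outlet", "Public official refers to report",
    "Institutional action", "Change of policy or regulation",
    "CIR staffer does a public appearance or interview",
    "Localization of story using CIR data", "Lawsuit filed", "Editorial",
    "Screening", "Professional organization cites reporting",
    "Social network share", "Other",
})


@dataclass(frozen=True)
class ImpactEvent:
    story: str                   # the story the event relates to
    occurred: date               # when it happened
    description: str             # what happened, in the reporter's words
    category: str                # must be one of CATEGORIES
    level: Level
    links: tuple[str, ...] = ()  # optional supporting links or documents

    def __post_init__(self) -> None:
        # Reject free-text categories so tallies stay comparable.
        if self.category not in CATEGORIES:
            raise ValueError(f"unknown category: {self.category!r}")


def tally(events: list[ImpactEvent], story: str) -> Counter:
    """Count one story's events by (level, category)."""
    return Counter((e.level, e.category) for e in events if e.story == story)
```

Rejecting unknown categories at entry time is a deliberate trade-off. It costs reporters a little flexibility, but it means a count of “Law change” events for one story can be compared with the same count for another. The catch-all “Other” category remains available for events that fit nowhere else.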

Green-Barber has been able to use the data generated to create analyses35 and reports36 on CIR’s impact. Other organizations, like The Seattle Times, have already started using the Impact Tracker.

Chalkbeat MORI

Chalkbeat, an education-focused publication, centers its impact collection around an open source WordPress plugin called MORI that combines article-tagging, event-tracking, and goal measurement.

Before an article is published, staff must categorize the story by type—Analysis, Curation, Enterprise, Quick Hit, etc.—as well as identify the post’s audience—Education Participants, Educational Professionals, General Public, or Influencers and Decision Makers.

If a story is related to a meaningful offline event, staff users can go to that article’s page in their CMS and add a narrative description and an impact tag of either “informed action, the actions that readers take based on our reporting” or “civic deliberation, the conversations readers have based on our reporting.”

Rather than simply reporting raw metrics, MORI first requires editors to define goals in advance, so that every number is displayed in the context of progress rather than performance. This was a conscious decision by Chalkbeat’s creators, who were wary of putting decontextualized metrics in front of journalists or of asking them to track the impact of their stories without being clear about why they were doing so.

MORI users can set goals in the following categories: Content Production (e.g., the number of stories written in focus areas such as teacher evaluations or the Common Core), Content Consumption (e.g., unique visitors, newsletter subscribers), and Engagement (e.g., Facebook fans, offline events hosted by the organization).
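MORI’s core idea, reporting a number relative to a predefined goal instead of on its own, can be sketched in a few lines. This is a hypothetical Python illustration: the `Goal` class, its fields, and the example targets are invented for the purpose and do not reflect MORI’s actual data model.

```python
from dataclasses import dataclass


@dataclass
class Goal:
    """A target set before measurement begins (hypothetical structure)."""
    category: str   # "Content Production", "Content Consumption", or "Engagement"
    metric: str     # what is counted, e.g. "newsletter subscribers"
    target: float   # value the editors committed to in advance
    current: float  # value observed so far

    def progress(self) -> float:
        """Fraction of the target reached; values above 1.0 mean the goal was exceeded."""
        if self.target <= 0:
            raise ValueError("target must be positive")
        return self.current / self.target

    def summary(self) -> str:
        # Report the number relative to its goal rather than as a bare count.
        return f"{self.metric}: {self.progress():.0%} of goal ({self.current:g} of {self.target:g})"


# Hypothetical goals and figures.
goals = [
    Goal("Content Production", "stories on teacher evaluations", target=40, current=30),
    Goal("Content Consumption", "newsletter subscribers", target=5000, current=5400),
]
for goal in goals:
    print(goal.summary())
```

The design choice is that a journalist never sees “30 stories” in isolation, only “30 of the 40 we planned,” which answers the question of why the number matters.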

While Chalkbeat had initial concerns about whether its journalists would adopt MORI, its founders were pleasantly surprised by its reception:

Within a week, we were watching conversations unfold in our newsrooms about whether this or that thing constituted an impact. People were eager to tally up the results of our stories. Indeed, reporters and editors quickly began asking how they could sort the data by the stories they had individually produced, a feature we had planned to roll out more slowly.

For more information, Chalkbeat’s video walkthrough and white paper on the topic are well worth reviewing.37

Quantitative Projects

The area of quantitative measurement is also seeing a number of new initiatives. The largest trend is what Andrew Montalenti from the analytics company Parse.ly referred to as the “democratization of the data pipeline,” where open source tools are maturing to the point that running your own analytics collection is becoming much easier. This development is notable as it opens the door for direct ownership over analytics information, as well as a lower barrier to entry for custom solutions. That is to say, if a company isn’t happy with the speed, interface, or flexibility of Google Analytics, it could more easily build its own in-house platform. This task is no small undertaking to be sure, but new advances bring it within the realm of possibility. Two projects in this space, Snowplow and Piwik, are worth mentioning.

Snowplow

Fairly new, Snowplow is an open source project that allows users to record user events and store the data on their own infrastructure.38 It’s the best example of the open source “data pipeline” and gives users the real-time speed of something like Chartbeat with the quantity of time-series data that Google Analytics provides. For most of what Google Analytics records, users must wait roughly 24 hours for that data to become available.

The Guardian started using Snowplow in early 2015 for the analytics on its Soulmates and membership pages. As opposed to Google Analytics, which tends to look at the page view as the atomic unit of consumption, Snowplow’s event-based system makes it easier to track user behavior and attach metadata to each action, said Dominic Kendrick, a software engineer at The Guardian. He also appreciates that it provides this data within five minutes of any user action. “The speed and control you have over what is recorded is the biggest thing, because innovation is limited by the speed of the software you implement. If you use a third party, you’re limited to that schedule,” Kendrick said. “Three years ago no one was doing this, but now you have options.”

Importantly, Snowplow focuses on efficiently recording and storing event-level interactions with a high degree of customization; it does not come with a visual dashboard out of the box. Advanced users will see this as a benefit, since it lets them create custom visualizations that answer their newsrooms’ specific questions. Others, however, may feel they are getting a mere bicycle frame (albeit a robust, free, and versatile one) when what they had in mind was something they could ride out of the store.

Which system makes practical sense will depend on the resources an organization devotes to analytics, but growth and wider adoption in this area are promising for future iterations of NewsLynx-like systems. Snowplow’s website keeps an updated list of companies currently using the system.39

Piwik

Similar to Snowplow, Piwik is another open source analytics suite.40 In addition to storing raw data, it also provides multiple dashboard interfaces for viewing analytics results. The largest implementation of Piwik we are aware of is for use at OpenStreetMap (OSM), a kind of Wikipedia for mapping the world that relies on open source, community-created mapping data.41

Eric Brelsford, a developer at the nonprofit 596 Acres42 and adjunct lecturer at the Pratt Institute, uses Piwik regularly. “We wanted just what Google Analytics does but in an open source way,” Brelsford said. “It also did a great job of importing our raw traffic data from our server logs so we could see our traffic from even before we had Piwik installed.”

While a number of WordPress plugins exist for CMS integration,43 the newsrooms we spoke to were almost entirely unaware of Piwik. Vendor solutions still dominate the analytics field, but, as mentioned above, the recent maturation of these open source tools and further testing of them at scale could change that dynamic.

Internal Newsroom Tools

A number of news organizations have built their own analytics dashboards. While we won’t be able to go over each of them in depth, the links below (and end citations) provide further detail.

NPR Visuals Team’s “Carebot”

Although our primary focus in this report is on investigative newsrooms, other organizations with different goals are also experimenting in this space. Carebot is the NPR Visuals team’s project to capture how their projects, often human-interest and photography-based, affect their audience. “What does impact for a team like ours look like?” asked Brian Boyer, editor of the Visuals team. “We came to the realization that what we create is empathy—we try and make people care about someone else. Carebot is finding ways to measure if we made people care or not.”

An open source project, Carebot focuses on user actions44 (e.g., how many people shared a story or how many liked it on Facebook) but with an added twist: It divides that number by the total unique visitors for a given story. This metric allows the team to say things like, “thirty percent of all people that read this shared it in some way.” Such statements let them more easily compare articles while controlling for variations in page views or total traffic.
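The normalization Carebot applies is straightforward arithmetic. This minimal Python sketch uses a hypothetical `action_rate` helper and invented figures to show how dividing actions by unique visitors lets a small story be compared with a heavily trafficked one. It assumes each action comes from a distinct visitor, which is an approximation.

```python
def action_rate(actions: int, unique_visitors: int) -> float:
    """Share of a story's unique visitors who took an action (e.g., shared it).

    Hypothetical helper; assumes one action per visitor, so it is an approximation.
    """
    if unique_visitors == 0:
        return 0.0
    return actions / unique_visitors


# Invented figures: a high-traffic celebrity story and a small photo essay.
stories = {
    "celebrity-news": {"shares": 4_000, "uniques": 200_000},
    "photo-essay": {"shares": 900, "uniques": 3_000},
}
for slug, s in stories.items():
    print(f"{slug}: {action_rate(s['shares'], s['uniques']):.0%} shared")
```

Here the celebrity story has more than four times as many shares in absolute terms, yet the photo essay’s share rate is fifteen times higher, which is exactly the comparison raw counts hide.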

The other part of the project involves adding questions at the end of a story, such as, “did you love this story?” or “did you learn something from this story?” If users answer “yes,” they are led to like the story on Facebook or donate to the station. These questions aim to bring people into the public radio family and are the result of thinking about user experience and user flow as a crucial part of the impact question. (Example: After a reader finishes a story, what should he or she do? And if we can think of actions we prefer over others, how can we optimize and measure that?)

“There are stories that are going to have great raw numbers because they are about a celebrity comedian that’s going to host The Daily Show and the controversy about his tweets: that’s just going to succeed,” Boyer said. “So how do you take work that is more to the mission of the organization and hold it up to say, ‘this thing might not have the page views, but it’s doing the mission.’ Carebot is about ‘how do we prove we’re doing the mission?’ ” Indeed, mission-driven metrics is a good label for this type of thinking and, as we’ll discuss later, highlights the crucial intersection between successful impact measurement and stated organizational goals.

NPR’s Analytics Dashboard

Also working at NPR, Melody Kramer and Wright Bryan designed and built an internal dashboard based specifically on the questions their editors and reporters had in the course of a news day.45 As we’ll discuss further, their user-centered design led them to frame visualizations in a friendly and inviting way and served as a great source of inspiration for parts of NewsLynx. Their platform took shape after hours of interviews with staff, focusing specifically on their daily decisions and how technology could help them arrive at smarter decisions faster.

ProPublica

ProPublica is another outlet taking significant strides to measure its impact. In a 2013 report, Richard Tofel, ProPublica’s president, outlined the organization’s approach to tracking impact:

ProPublica makes use of multiple internal and external reports in charting possible impact. The most significant of these is an internal document called the Tracking Report, which is updated daily …The report records each story published …and any prominent reprints or pieces following the work by others (with most of this data derived from Google Alerts and clipping services). Beyond this, the Tracking Report also includes each instance of official actions influenced by the story (such as statements by public officials or agencies or the announcement of some sort of non-public policy review), opportunities for change (such as legislative hearings, an administrative study or the appointment of a commission) and, ultimately, change that has resulted. These last entries are the crux of the effort. They are recorded only when ProPublica management believes, usually from the public record, that reasonable people would be satisfied that a clear causal link exists between ProPublica’s reporting and the opportunity for change or impact itself.46

Tofel goes on to explain that ProPublica tracks these outcomes for years after an article’s publication—“possible prosecutions and fines continue to result from this work long after the reporters involved have moved on to other work, and ProPublica notes these as they emerge.” In tracking the impact of its work, ProPublica has also developed sophisticated tools like PixelPing,47 which allows for measuring traffic to its articles that have been republished on other sites.

The Guardian’s Ophan System

The Guardian uses a custom system called Ophan that is tuned to the needs of editors so that they can quickly see what is being read on the site and start to explain the why behind those trends.48 The system is quite detailed and ever-changing. The linked walkthrough, however, is a good starting point.

The New York Times’s Package Mapper

One typical newsroom-specific problem is how to understand the way readers engage with a package of stories. Editors have ideas about how readers should navigate between these pages, but few teams are tracking how they actually do so. To better understand these flows, James Robinson built Package Mapper to track the multi-story experience.49 Similar to Carebot and miles away from the simplistic page view, this project looks at user experience and user flow as a key part of understanding performance.
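One way to approach the question of how readers actually move through a package is to count transitions between its pages within each reading session. The sketch below is a hypothetical Python illustration of that idea, not a description of how Package Mapper is built; the `transition_counts` function, the session data, and the page names are invented.

```python
from collections import Counter


def transition_counts(sessions: list[list[str]], package: set[str]) -> Counter:
    """Count moves from one package page to the next within each reader's session."""
    counts: Counter = Counter()
    for pages in sessions:
        # Keep only package pages, in viewing order; a detour through a
        # non-package page therefore still counts as a direct move.
        in_package = [p for p in pages if p in package]
        for src, dst in zip(in_package, in_package[1:]):
            if src != dst:  # ignore reloads of the same page
                counts[(src, dst)] += 1
    return counts


# Invented sessions: each list is the ordered pages one reader viewed.
package = {"part-1", "part-2", "part-3"}
sessions = [
    ["home", "part-1", "part-2", "part-3"],
    ["part-1", "part-2"],
    ["part-2", "part-1"],
    ["part-1", "about", "part-3"],
]
for (src, dst), n in transition_counts(sessions, package).most_common():
    print(f"{src} -> {dst}: {n}")
```

Comparing these counts with the path editors intended (part 1, then 2, then 3) shows where readers skip ahead or double back.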

Where NewsLynx Fits: Incorporating Qualitative and Quantitative

While there are many current efforts to address the challenges of capturing the quantitative and qualitative impacts of media, there remains a clear need for a comprehensive platform that incorporates these disparate data streams while remaining relatively agnostic about which metrics are viewed. Chalkbeat’s MORI attempts to do just this, but its designers have an unusually deep understanding of their organization’s content and goals, which many newsrooms lack. MORI is distributed as a WordPress plugin, likely the best choice for reaching the largest number of newsrooms, but requiring CMS integration can hinder adoption in many organizations. MIP’s Measurement System promises a similar platform, but it is still in active development. We designed and built NewsLynx to address these needs in the present. By framing it as a research project conducted in the open, we hope to share our successes and failures and so help the media impact community move forward.

Research Findings

Preparatory Research

Our background research had two parts:

- A survey we circulated online. It was announced in our launch blog post and emailed to newsrooms we judged to fit our target demographic.50

- Focused interviews with newsrooms that fit our target demographic (and some that didn’t).

Our survey (Appendix A) looked at six categories and adapted some questions from a similar survey circulated by Joanna Raczkiewicz at the Harmony Institute in 2013. Newsrooms agreed to participate anonymously and to be identified only by their size and general characteristics. The survey focused on the following main areas:

- Organization profile—what size/type of newsroom?

- Content streams—what types of stories (cultural, aggregation, investigative, etc.) and publishing schedule?

- Current quantitative analytics practices

- Current qualitative analytics practices

- Institutional challenges and goals

- Actions—what is this information used for/who are the stakeholders?

The 26 organizations that responded to our survey varied greatly in size and in their prior experience measuring impact. Some employed a small team that generated daily reports circulated to staff, while others had no one officially charged with the task.

We conducted follow-up interviews, usually an hour to an hour-and-a-half long, from March to July 2014 with newsrooms that had completed the survey and had indicated interest in using the beta NewsLynx platform. It was important that they had websites with which the software could interface, namely an RSS feed and Google Analytics.

Below is a summary of our survey results and more detailed interviews. Going forward, we’ll refer to the target user of NewsLynx as an Impact Editor, or IE, as shorthand to describe the position tasked with data management.ii

What Do Newsrooms Measure and Why?

One of the most surprising sentiments echoed throughout our research was the importance still placed on quantitative measures such as page views. The reasons generally fell into two categories: newsrooms collected these numbers because donors asked for them, or because they saw some utility in tracking growth trends, as explained in the introduction.

For organizations that rely heavily on syndication (newsrooms that allow others to copy their articles whole cloth), “reach” was also a big sticking point. Techniques for measuring it differ greatly, but it is generally calculated by multiplying the organization’s circulation or home page traffic by a varying, unscientifically derived percentage. This practice might seem blasphemous until one considers Google Analytics (one of the biggest and most popular platforms, developed and maintained by arguably the largest and most powerful technology company in the world), which returns only estimates of any given metric. In our experience, for example, Google Analytics returns metric values only in multiples of 12. Google Analytics can return more precise values through its enterprise product, but that product’s cost puts it out of reach for all but a small number of news organizations, so imprecision is often the norm.
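To see how loose this estimate is, here is the calculation as described, written as a minimal Python sketch with hypothetical inputs. The `estimated_reach` function and the circulation figure are invented for illustration; the `assumed_share` parameter stands in for the “unscientifically derived” percentage.

```python
def estimated_reach(base_audience: int, assumed_share: float) -> int:
    """Reach as circulation (or home page traffic) times an assumed share of readers.

    Hypothetical helper: assumed_share is a guess, not a measurement.
    """
    return round(base_audience * assumed_share)


circulation = 250_000  # hypothetical circulation figure
for share in (0.05, 0.10, 0.20):
    print(f"assumed {share:.0%}: reach = {estimated_reach(circulation, share):,}")
```

Quadrupling the assumed share quadruples the reported reach, so the figure says as much about the assumption as about the audience.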

Even when organizations acknowledged that both quantitative and qualitative measures were valuable, quantitative measures were still more closely tied to their business models. “We are working to diversify revenue sources and need strong metrics to buttress our qualitative measures,” wrote one growing investigative organization. One broadcast organization summarized this conundrum of revenue versus mission:

In some ways, audio-listens are the single most important thing we can track because that drives underwriting and donations. But we are also mission-driven, so journalism that affects laws and people’s lives and sense of themselves and their relations to others can be equally important.

Although many organizations use quantitative measures, they find the limited insight those measures provide frustrating. Organizations expressed a desire for a new metric that could satisfy the hunger for quantitative simplicity while offering useful insight, usually in the form of more information about the audience’s relationship to their articles.

In response to the question, “how could measurement help your business or content strategy?” one organization wrote: “A qualitative metric we could present to shareholders showing the ROI of our investment in social media outreach, our marketing efforts, and our dedication to usability.”

To construct such a metric, you would need to agree on some proxy for popularity or discussion level on social platforms (likes, shares, mentions, retweets all come with their own caveats) while taking into account promotional efforts on individual articles. You would then need to segment these results across devices and, if traffic or behavior patterns differ, be able to attribute those differences to either your internal efforts or external factors. This analysis would more realistically be shown in multiple metrics, but the desire for it reflects the need to understand how audiences are reached, along with the pressure to explain what, if anything, is having a demonstrable effect.

The desire to know more about how the audience engaged was echoed elsewhere as well:

From a content perspective, [impact measurement] could help us figure out where to focus our energies, in theory. Given that we are a cash-strapped, resource-strapped nonprofit, should we be spending so much time making a piece of radio and then also adding stunning visuals and writing a compelling text story—or are those just bells and whistles that will get us minimal ROI? What’s the difference between the users who get our stuff on the radio, on the web, and via their phones (on our app or other apps), and are they significantly interested in different kinds of content and do they have different time constraints? What, if anything, might drive people who encounter us out of nowhere on social media to explore our other content, like it, and maybe one day become not just a return visitor but a member? What messaging and coverage encourages participation in user-generated stories (and are those things which can actually help us AND serve the public good?) as well as become part of the public radio family? This is just a start.

Another organization echoed this:

We deeply distrust the page view stat and we see other organizations with more tech resources develop their own fancy metrics such as Medium’s Total Time Reading or Upworthy’s Attention Minutes and can’t help but feel we’re missing out on essential things about our audience. Google Analytics feels both too complicated and not powerful enough for the questions we want to answer about readers. It doesn’t help that Google, Facebook, Twitter, Quantcast, Comscore, and anything else we’ve used never agree on anything. And of course quantifying impact is tough and while we try very hard, some recognized external standards, if wise, could be useful.

We repeatedly encountered the sentiment that existing analytics platforms are “both too complicated and not powerful enough” at other organizations. By “not powerful enough,” users mean that they don’t help answer sophisticated questions that could bolster arguments around, for example, content strategy. Should a radio station continue putting resources into text versions of their stories for the web? Are people not scrolling all the way down the page because the headline and first three grafs were succinctly written and the reader “got it” or because the story wasn’t interesting? Or, is the website’s design—not the journalism—contributing to a high bounce rate? Many organizations would like answers to complex questions like these but, for the moment, data in simpler forms is what they are being asked to report, and technology platforms can’t answer these questions out of the box.

It’s important to point out that some newsrooms completely disregard quantitative metrics or see them as only potentially valuable. As one small investigative organization wrote: “Our mission is to have impact and improve the public interest. For a while we chased traffic and found it negatively impacted our work, and brought no results.”

The pressure to provide quantitative metrics can also be a bit of a moving target—driven by the shifting tastes of funders or changing understanding of what constitutes meaningful measurement. In fact, the Media Impact Project is currently developing a two-sided booklet addressing this very dynamic—what newsrooms are currently measuring on one side and what information funders are requesting (or should be requesting) on the other.iii

Nevertheless, many of these responses influenced our decision to keep a number of quantitative metrics in our system and augment their usefulness through comparison points and context.

What Do Impact Reports Look Like and How Are They Used?

Responses on both of these points were extremely mixed. While most impact reports are strictly internal, some organizations, such as the Wisconsin Center for Investigative Journalism and ProPublica, publish examples of their impact on their websites.51 The former also runs an ongoing project called Investigative Reporting + Art, for which it has commissioned artists to create sculptures inspired by the center’s reporting.52 The pieces then travel the state to schools and other public institutions.

Some organizations only produced reports for foundations that fund them, whereas others produced regular newsletters circulated among the editorial staff—sometimes including one-on-one emails notifying reporters of significant events. Here is an example from a mid-sized investigative and culture publication:

In addition to the qualitative parts of the Board report and grant reports referenced above, we produce a biweekly internal memo that catalogues the qualitative successes of the prior two weeks. We break these up into the following categories:

- Impact: Politicians citing our reporting, law changes, corporate actions, etc.

- Events: Either that we’ve organized or at which our people have appeared.

- In the News: A small selection of the highest profile and most interesting links and citations from other outlets.

- Social Love: A small selection of the highest profile and most interesting social media mentions of our reporting.

- Awards: All the awards we’ve won and been nominated for.

On the more quantitative end is one large-circulation, daily organization based in South America:

I usually relate different metrics of story performance (like time spent versus characters) and section performance (which sections get more or less visits than would be predicted by the amount of stories they publish). Then I go on to analyze what characteristics underperforming and outperforming stories have.
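The section comparison in that description (whether a section draws more or fewer visits than its share of published stories would predict) reduces to a ratio of observed to expected visits. A minimal Python sketch follows; the `section_index` function, section names, and figures are hypothetical and are not taken from the respondent’s workflow.

```python
def section_index(stories: dict[str, int], visits: dict[str, int]) -> dict[str, float]:
    """Observed visits divided by the visits expected from each section's share of stories.

    A value above 1.0 means the section draws more traffic than its story count predicts.
    """
    total_stories = sum(stories.values())
    total_visits = sum(visits.values())
    if total_stories == 0 or total_visits == 0:
        return {}
    index = {}
    for section, n in stories.items():
        if n == 0:
            continue  # no stories published, so there is no expectation to compare against
        expected = total_visits * n / total_stories
        index[section] = visits.get(section, 0) / expected
    return index


# Hypothetical month of output: story counts and visits per section.
stories = {"politics": 50, "sports": 30, "culture": 20}
visits = {"politics": 400_000, "sports": 450_000, "culture": 150_000}
for section, value in sorted(section_index(stories, visits).items()):
    print(f"{section}: {value:.2f}")
```

With these numbers, sports publishes 30 percent of the stories but draws 45 percent of the visits, an index of 1.5, while politics and culture underperform their share.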

Organizations that keep the editorial team regularly informed of impact events through such reports said that it helps improve morale and keep the newsroom focused on its mission. As mentioned before, these efforts are more successful when the organization has articulated its goals and, consequently, what constitutes important measures of impact for it.

Challenges

Despite advances in understanding what successful impact measurement could look like, the fact remains that cataloging information will always take time and expertise, even with custom-built tools. Technology can solve some of the efficiency problems—many of which we tried to tackle with NewsLynx—but Impact Editors will still be required to make sense of the information. Goal-tracking remains an organizational and cultural challenge, not a technology problem.

As one organization succinctly put it, impact reporting is “time-consuming and measurements of engagement are still elusive.”

Platform Description

We designed NewsLynx around two sets of tasks where staff found difficulties:

- Managing an event-tracking workflow while juggling other responsibilities.

- Understanding what it means for a story to “do well” and what happened to cause that.

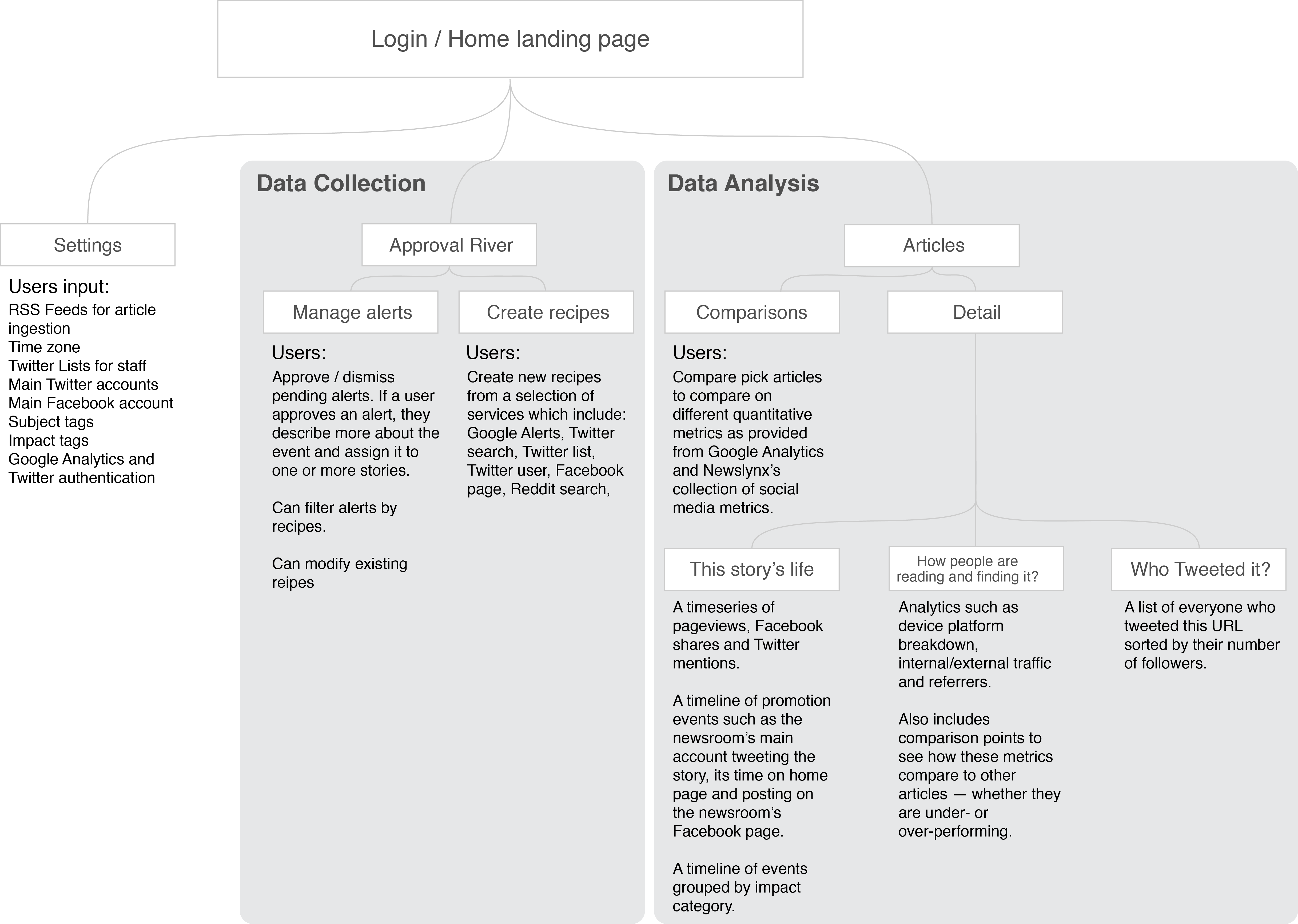

NewsLynx has two main interfaces: a workflow-management tool for collecting and organizing indications of impact (mostly done in a section called the Approval River) and a section for analyzing stories’ impact where comparisons and related metrics can be seen.

Below is a diagram of the site layout. The next sections will go into detail about our impact framework and each of the platform’s interfaces. For a more technical walkthrough and code repositories, please see our GitHub: https://github.com/newslynx.

- Recipes: Bots that automatically flag impact indicators from external services.

- Approval River: Users manage content coming into the system from recipes; meaningful impact indicators are then attached to stories as “impact events.”

- Subject Tags: Free-text labels to describe the content of stories.

- Impact Tags: Free-text labels to describe impact within an impact framework to allow for grouping and comparisons.

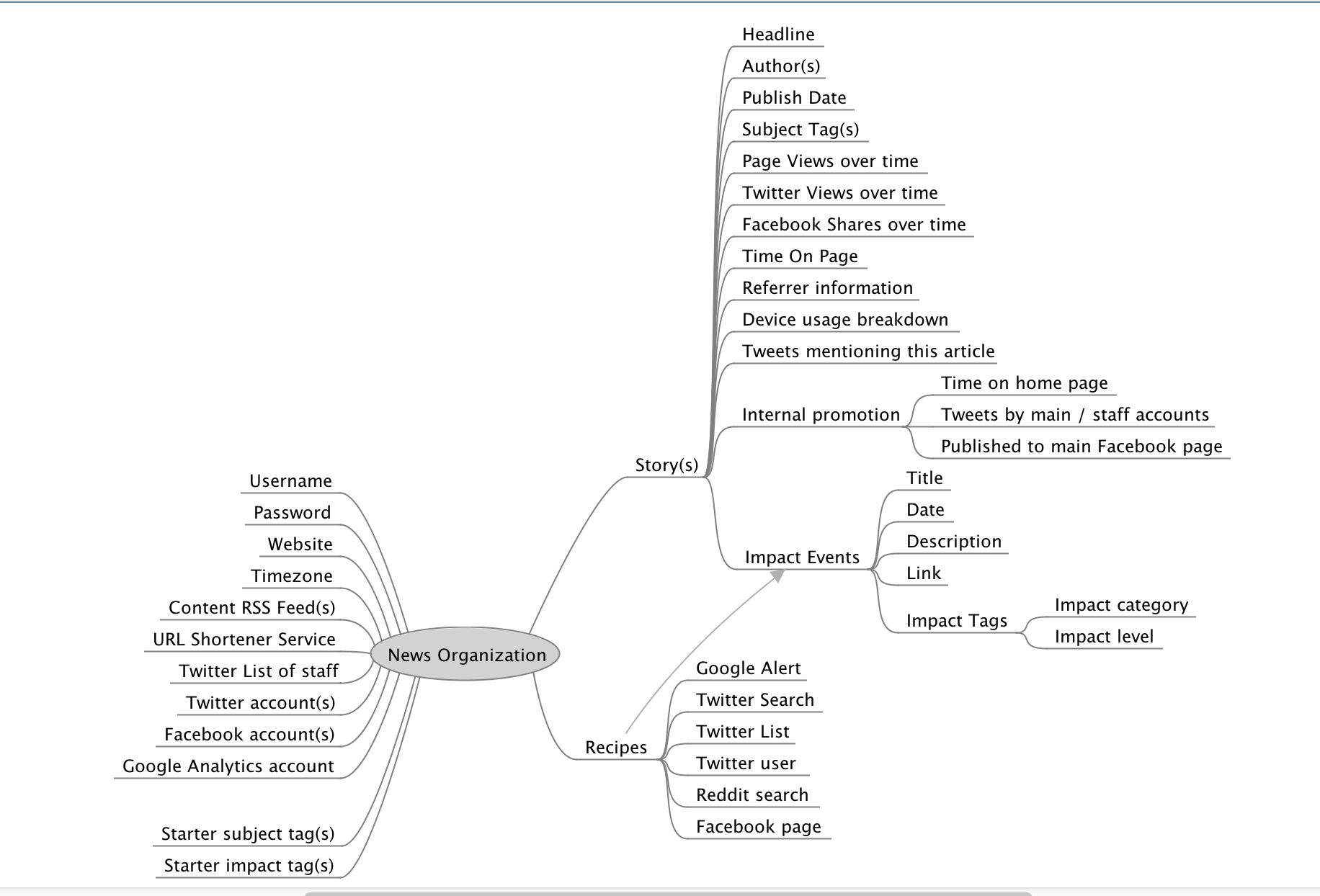

The Model

This diagram shows the concepts that are implemented in the NewsLynx application. Each of the significant concepts is explained below.

- Stories: Published output of the news organization.

- Recipes: The output of recipes automatically populates the Impact Events.
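The flow between these concepts (recipes feeding candidate items into the Approval River, and approved items becoming impact events attached to stories) can be sketched as a small state machine. Everything in this Python sketch, including the class names, fields, recipe names, and the `approve` and `reject` functions, is a hypothetical illustration rather than NewsLynx’s actual code, which lives in the project’s GitHub repositories.

```python
from dataclasses import dataclass, field


@dataclass
class Candidate:
    """An item a recipe flagged as a possible impact indicator (hypothetical)."""
    recipe: str
    url: str
    text: str
    status: str = "pending"  # pending -> approved | rejected


@dataclass
class ImpactEvent:
    url: str
    text: str
    story_ids: list[str]
    impact_tags: list[str]


@dataclass
class Story:
    story_id: str
    subject_tags: list[str] = field(default_factory=list)
    events: list[ImpactEvent] = field(default_factory=list)


def approve(c: Candidate, stories: list[Story], impact_tags: list[str]) -> ImpactEvent:
    """Turn a pending candidate into an impact event and attach it to each story."""
    if c.status != "pending":
        raise ValueError(f"candidate already {c.status}")
    event = ImpactEvent(c.url, c.text, [s.story_id for s in stories], impact_tags)
    for s in stories:
        s.events.append(event)
    c.status = "approved"
    return event


def reject(c: Candidate) -> None:
    """Dismiss a false positive so it drops out of the river."""
    c.status = "rejected"


# Hypothetical river contents produced by two invented recipes.
river = [
    Candidate("twitter-search", "https://example.org/a", "Senator links to story"),
    Candidate("google-alert", "https://example.org/b", "Unrelated mention"),
]
story = Story("immigration-detention")
approve(river[0], [story], ["Influencer mention"])
reject(river[1])
pending = [c for c in river if c.status == "pending"]
```

Keeping the recipe output separate from approved events means automated searches can be noisy without polluting the record; only a human decision creates an impact event, and that decision is where consistent tags get applied.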

The Impact Framework, as Implemented

A major goal of our initial research was to investigate the feasibility of an impact taxonomy—shared terms that could make sense for multiple organizations.

Taxonomies: On Defining the World

Taxonomies are notoriously difficult to create because real-world data does not necessarily fall into discrete buckets. Take, for instance, Jorge Luis Borges’s Celestial Emporium of Benevolent Knowledge, which divided animals into the following categories:53

- Belonging to the emperor

- Embalmed

- Tame

- Suckling pigs

- Sirens

- Fabulous ones

- Stray dogs

- Included in the present classification

- Frenzied

- Innumerable

- Drawn with a very fine camelhair brush

- Et cetera

- Having just broken the water pitcher

- That from a long way off look like flies

Although the list is humorous, any taxonomy inherits some of the same absurdity and futility. In the news context, we face constantly shifting content types as well as desired outcomes that differ from project to project. So in developing our framework, rather than imposing a strict taxonomy, we intentionally left the question of what constitutes an impactful event to the discretion of each newsroom.

One strategy that gives more comparative power to qualitative taxonomies involves assigning values to each category—making it more like a quantitative measure. Since it’s one of the simplest examples of impact to visualize, let’s see how this type of impact classification would play out around legislative activity.

For example, let’s say that any article that led to the creation of a law might earn a rating of 10, an article cited by an influencer (however defined) might earn a 6, and so on. This strategy gets tricky, however, since one must quantify just about everything. How many points separate a bill that passed, one that was merely proposed, a watered-down bill that passed but didn’t fully solve the problem, and a bill that didn’t pass yet spurred vigorous public debate and changed the narrative around an issue? How would one assign points under different assumptions about causality? If Congress is moved to investigate an industry, how much of that can be attributed to any one piece of reporting on the topic from any one news organization? Would the value scale seek to capture the strength of that causal link?

To borrow a term from statistics, we think this approach “overfits” the model to the data: it might perfectly describe one scenario, but it loses all generalizability to new events or to events at other newsrooms. Borges offers another apt image: “On Exactitude in Science” describes the uselessness of a one-to-one mapping between a subject and the frame used to understand it:

…In that Empire, the Art of Cartography attained such Perfection that the map of a single Province occupied the entirety of a City, and the map of the Empire, the entirety of a Province. In time, those Unconscionable Maps no longer satisfied, and the Cartographers Guilds struck a Map of the Empire whose size was that of the Empire, and which coincided point for point with it. The following Generations, who were not so fond of the Study of Cartography as their Forebears had been, saw that that vast map was Useless, and not without some Pitilessness was it, that they delivered it up to the Inclemencies of Sun and Winters. In the Deserts of the West, still today, there are Tattered Ruins of that Map, inhabited by Animals and Beggars; in all the Land there is no other Relic of the Disciplines of Geography.54

Model Concept: Impact Tags

For the reasons explored above in the discussion of taxonomies and for the simple fact that impact measurement must be closely wed to an organization’s goals, we chose broad language to define our impact framework. In our system, each organization is free to make an “impact tag” for any type of event it finds important. For structure, each tag must have both a category and a level, ideas for which we took inspiration from Chalkbeat and the Center for Investigative Reporting (CIR), respectively.

Model Concept: Categories

Chalkbeat’s MORI system has only two categories of impact. An event is evidence of “civic deliberation”—did someone talk about it or discuss the issue in some way?—or an “informed action”—did it, at least in part, bring something about? This structure was useful not only in avoiding the impact rabbit hole described above, but also in disambiguating the term “impact.” For example, some newsrooms call reader reactions or references to their work in legal proceedings “impact.” And while you could argue that the article “brought about” that citation, the state of affairs didn’t change. We felt this distinction between talk and action was an important one to standardize.

In addition to these categories, which we renamed “Citation” and “Change,” we added two more: “Achievement,” which covers articles that win an award, see record traffic, or are cited more than any other story (in effect, a meta-category); and “Other,” which preserves the spirit of NewsLynx as an open research platform whose framework is open to evolution. If trends develop within the “Other” category, the framework can and should adapt.

Model Concept: Levels

From CIR we borrowed the idea that an impactful event can happen at different scales. Its tracker uses the terms Micro, Meso, and Macro, as previously discussed. To make these more understandable to the average newsroom and to expand on the idea, we ended up with five levels:

- Institutional

- Community

- Individual

- Media

- Internal

The most novel of these levels is Internal, which recognizes that projects can shift organizational priorities or become models for future work.

The Combination of Tags, Categories, and Levels

Putting these three concepts together, a sample configuration could look like the following:

| Tag name | Category | Level |
|---|---|---|
| Reprint/Pickup | Citation | Media |
| Localization | Citation | Media |
| Influencer mention | Citation | Individual |
| Editorial | Citation | Media |
| Award | Achievement | Institutional |
| Increased awareness | Change | Community |
| Staff interview/appearance | Citation | Media |
| Government investigation | Change | Institutional |
| Internal discussion | Citation | Internal |
| Policy/regulation change | Change | Institutional |
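To make the model concrete, here is a minimal Python sketch of how tags, categories, and levels might be represented together. The names `Category`, `Level`, `ImpactTag`, and `tags_in` are hypothetical illustrations, not NewsLynx's actual schema; the sketch only assumes, as the table does, that each tag belongs to exactly one category and one level.

```python
from dataclasses import dataclass
from enum import Enum


class Category(Enum):
    # The four impact categories described above.
    CITATION = "Citation"
    CHANGE = "Change"
    ACHIEVEMENT = "Achievement"
    OTHER = "Other"


class Level(Enum):
    # The five levels at which an impact event can occur.
    INSTITUTIONAL = "Institutional"
    COMMUNITY = "Community"
    INDIVIDUAL = "Individual"
    MEDIA = "Media"
    INTERNAL = "Internal"


@dataclass(frozen=True)
class ImpactTag:
    # A user-defined tag bound to exactly one category and one level
    # (hypothetical structure for illustration).
    name: str
    category: Category
    level: Level


# A few rows from the sample configuration table.
SAMPLE_TAGS = [
    ImpactTag("Reprint/Pickup", Category.CITATION, Level.MEDIA),
    ImpactTag("Influencer mention", Category.CITATION, Level.INDIVIDUAL),
    ImpactTag("Award", Category.ACHIEVEMENT, Level.INSTITUTIONAL),
    ImpactTag("Policy/regulation change", Category.CHANGE, Level.INSTITUTIONAL),
    ImpactTag("Internal discussion", Category.CITATION, Level.INTERNAL),
]


def tags_in(category: Category, tags: list) -> list:
    """Return the names of all tags filed under the given category."""
    return [t.name for t in tags if t.category is category]
```

Because the category and level are fixed per tag, every event carrying a given tag is classified the same way, which is what makes events comparable across stories.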

Other Concepts for Possible Inclusion

Because research is an ongoing process, we have continued to discuss, since NewsLynx’s launch, areas of impact that might be worth including in the default configuration. Kramon, of The New York Times, uses a similar “Change” category as the most important measure, calling it “the gold standard for journalism.” He also stressed the importance of celebrities and humorists commenting on the Times’s work as a sign that its journalism had broken out into the larger popular culture:

You want everyone from Oprah to Taylor Swift to speak out on a subject and ideally praise the work of The New York Times. I remember once we did a story that Rush Limbaugh praised, and we were able to say, “everyone from Rush Limbaugh to Paul Krugman praised this work.”

Kramon added:

Humor really works, also. If people pick up on it and try and make it funny, that should be a compliment to the journalistic organization—even if it’s just a local cartoonist. It doesn’t need to be somebody that’s nationally known.

You could most easily incorporate these ideas with tags in the “Citation” category at the level of “Media” or “Individual.” For example:

| Tag name | Category | Level |
|---|---|---|
| Celebrity commentary | Citation | Individual |
| Humorist/spoof | Citation | Media |
| Pop culture appearance | Citation | Media |

Model Concept: Subject Tags

The other type of classification we employed is the subject tag, which is an open tagging system to assign stories to different editorial verticals. This could also be used to group stories that appear in a series or package. As we’ll discuss in the next section, NewsLynx runs statistics across subject-tag groups so newsrooms can see how different articles or packages perform against other groups.
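As an illustration of running statistics across subject-tag groups, the following minimal Python sketch summarizes one metric per group. The input shape (a list of dicts with a `subject_tags` list and numeric metric fields) and the function name `group_stats` are assumptions made for this example, not NewsLynx's data model.

```python
from statistics import mean, median


def group_stats(articles, metric):
    """Summarize one metric for every subject-tag group.

    `articles` is a list of dicts with a "subject_tags" list and numeric
    metric fields (a hypothetical shape). An article with several subject
    tags contributes to each of those groups.
    """
    groups = {}
    for article in articles:
        for tag in article["subject_tags"]:
            groups.setdefault(tag, []).append(article[metric])
    return {
        tag: {"n": len(values), "mean": mean(values), "median": median(values)}
        for tag, values in groups.items()
    }
```

Letting an article count toward every group it is tagged with mirrors the idea that a story can belong to both an editorial vertical and a series or package at the same time.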

NewsLynx Interface

The Approval River

The NewsLynx Approval River is a section where users manage impact indicators for stories their newsroom publishes. It allows users to create “recipes” that let them connect to existing clip-search-type services or perform novel searches on social media platforms. The results of these recipe searches go into a queue where they can be approved or rejected.

The tool is designed to streamline (and perhaps replace) a common existing workflow for measuring impact, in which IEs monitor one or more news-clipping services for mentions, local versions, or republication of their work (if the organization allows republication). Many of the IEs we interviewed described difficulty managing the variety of clipping services they used, as well as storing the meaningful hits in one place. In addition, the process’s complicated nature, which often required different login credentials for each service, took up an inordinate amount of time and raised the barrier to training someone new on the system.

Out of the box, NewsLynx supports the following recipes:

- Google Alert

- Twitter List

- Twitter User

- Twitter Search

- Facebook Page

- Reddit Search

The Approval River provides easy methods for gathering information from social platforms. For example, one recipe NewsLynx includes searches a Twitter List for keywords. Let’s say that, as part of an investigation, your organization has identified 25 key influencers or decision-makers and added their handles to a Twitter List. A recipe could watch that list and notify you when its members discuss the topic or when anyone on it shares a URL from your site. This alert would show up in the Approval River and, if approved, would be assigned to the relevant article along with any other information the IE wishes to add.

A simple way to think of this page is, “if this, then impact.”
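The “if this, then impact” pattern can be sketched in a few lines of Python. This is a hypothetical illustration, not NewsLynx’s recipe code: `ListRecipe`, `Candidate`, and `review` are invented names, and fetching posts from Twitter is assumed to happen elsewhere, so the recipe simply receives `(author, text)` pairs.

```python
from dataclasses import dataclass, field


@dataclass
class Candidate:
    # One possible impact indicator awaiting review in the queue.
    source: str
    text: str
    status: str = "pending"  # "pending", "approved", or "rejected"


@dataclass
class ListRecipe:
    """Hypothetical recipe: watch posts from a list of accounts."""
    domain: str
    keywords: list = field(default_factory=list)

    def matches(self, post_text: str) -> bool:
        # "If this": the post links to our domain or mentions a keyword.
        lowered = post_text.lower()
        if self.domain.lower() in lowered:
            return True
        return any(k.lower() in lowered for k in self.keywords)

    def run(self, posts):
        # posts: iterable of (author, text) pairs fetched elsewhere.
        return [Candidate(author, text) for author, text in posts if self.matches(text)]


def review(queue, decisions):
    """Apply approve/reject decisions (queue index -> bool); return approved items."""
    for i, approved in decisions.items():
        queue[i].status = "approved" if approved else "rejected"
    return [c for c in queue if c.status == "approved"]
```

For example, `ListRecipe("example.org", ["charter schools"]).run(posts)` would queue any post from the list that links to `example.org` or mentions charter schools, and an IE would then approve or reject each queued item.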

With some programming knowledge, anyone can add new recipes. NewsLynx can also be set up to receive emails from different clipping services and process those streams as recipe feeds.
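As a rough sketch of turning a clipping-service email into recipe feed items, the Python function below uses the standard library `email` module to read the plain-text parts of a message and pull out every URL. The function name `email_to_feed_items` and the one-URL-per-hit parsing rule are assumptions; real clipping services format their alerts differently, and NewsLynx’s own email handling may work in another way.

```python
import re
from email import message_from_string

# Deliberately simple URL pattern; a placeholder for service-specific parsing.
URL_PATTERN = re.compile(r"https?://[^\s<>\"]+")


def email_to_feed_items(raw_email: str):
    """Turn one clipping-service email into (subject, url) feed items."""
    msg = message_from_string(raw_email)
    subject = msg.get("Subject", "")
    body = ""
    # walk() yields the message itself, then any subparts; keep plain text only.
    for part in msg.walk():
        if part.get_content_type() == "text/plain":
            payload = part.get_payload(decode=True) or b""
            body += payload.decode("utf-8", "replace")
    return [(subject, url) for url in URL_PATTERN.findall(body)]
```

Each returned pair could then be queued in the Approval River just like a hit from any other recipe.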

Analytics Tools

The analytics section is where we hope users can gain insight into metrics that interest them and view any information about an article all in one place.

We designed this section of the platform with two guiding principles in mind: Make it navigable for the average newsroom user and give context to numbers and events wherever possible.

On the first point, we organized our data and presentation at the article level, which is often not the case in platforms such as Google Analytics. We also labeled our graphs and data visualizations with sentences and questions, such as “who’s sending readers here?” instead of more ascetic labels like “traffic-referrers.” On this point we were inspired greatly by NPR’s previously mentioned internal metrics dashboard, which proposes these semantic headers as a way to make dashboards more easily approachable for average newsroom users.55

To understand “how well a story did,” metrics need context. As a result, our other principle was never to show a number in isolation—any value should always be contextualized with respect to some baseline. In our two analytics views—the multi-article comparison view and the single-article detail view—we provide this by always comparing a given metric to a baseline value, such as “average of all articles along this metric.” Users can easily change this baseline to the average of all articles in a given subject-tag grouping. In other words, “show me how these articles performed as compared to all politics articles.”
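The “never show a number in isolation” principle amounts to dividing a value by a baseline computed over a comparison group. The Python sketch below is a hypothetical illustration of that idea (the function name and article shape are assumptions): the baseline is the mean or median of a metric across all articles, or across only those sharing a subject tag.

```python
from statistics import mean, median


def compare_to_baseline(value, articles, metric, tag=None, stat="mean"):
    """Express `value` relative to a baseline drawn from comparable articles.

    With tag=None the baseline covers all articles; otherwise only those
    carrying that subject tag. Returns value / baseline, so 1.0 means
    "exactly at the baseline" and 1.5 means 50 percent above it.
    """
    pool = [a[metric] for a in articles if tag is None or tag in a["subject_tags"]]
    if not pool:
        raise ValueError("no articles in the comparison group")
    baseline = mean(pool) if stat == "mean" else median(pool)
    if baseline == 0:
        raise ValueError("baseline is zero; ratio is undefined")
    return value / baseline
```

Passing `tag="politics"` corresponds to “show me how these articles performed as compared to all politics articles.”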

We also do our own novel data collection to view article performance in the context of newsrooms’ promotional activities. For instance, we collect when a given article appeared on a site’s home page, when any main or staff Twitter accounts tweeted it out, and when it was published to the organization’s Facebook page or pages.

We’ll walk through each section to see how we implemented these comparisons and context views in the platform.

Article Comparison View

When users open the Articles screen, they see a list of their top 20 articles with bullet charts across common Google Analytics and social metrics.iv Bullet charts are so named because they include a small bar, the bullet, that shows whether a given metric is above or below a certain reference point. In this view, users can see at a glance which articles are overperforming or underperforming, based on whether the blue bar extends beyond the bullet or falls short of it. Users can change the comparison point from “all articles” to any group of articles sharing a subject tag.

To keep a few high-performing articles from skewing the results, the bullet charts use the 97.5th percentile as the maximum value. In addition, users can select the median rather than the average as the comparison point, since the median is more resistant to outliers. Users change the comparison point using the dropdown menus at the top of the middle column.
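The percentile cap can be illustrated with a short Python sketch. It uses one common percentile definition (linear interpolation between sorted values); whether NewsLynx uses exactly this definition is an assumption, and `bullet_scale` is a hypothetical helper name.

```python
import math


def percentile(values, p):
    """Linear-interpolation percentile (0 <= p <= 100) of a non-empty list."""
    ordered = sorted(values)
    rank = (len(ordered) - 1) * p / 100
    low, high = math.floor(rank), math.ceil(rank)
    return ordered[low] + (ordered[high] - ordered[low]) * (rank - low)


def bullet_scale(value, all_values, p=97.5):
    """Return (bar length as a fraction of the axis, axis maximum).

    The axis maximum is capped at the p-th percentile so a few viral
    articles do not squash every other bar; values above the cap are
    clipped to a full-length bar.
    """
    axis_max = percentile(all_values, p)
    length = min(value / axis_max, 1.0) if axis_max else 0.0
    return length, axis_max
```

With page views of 1 through 100, for instance, the axis tops out near 97.5 rather than at 100, and a single article with 10,000 views would not change the scale much, while its own bar would simply be drawn at full length.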

The design of this section is meant for small-batch comparisons between groups of articles. For instance, IEs can compare the seven articles in one investigation against those from another package, or they could compare recent articles against historic performance.

Article Detail View

NewsLynx also lets users easily drill down to the individual article level to see a timeline of qualitative and quantitative performance, as well as contextualized, detailed metrics about traffic sources and reader behavior.

This view also shows top-level tag information and lets users manually create an impact event or download an article’s data.

The information on this page is divided into three sections:

- This story’s life—A time series of page views, Twitter mentions, Facebook shares, time on home page, internal promotion, and online or offline events, whether created manually or assigned through the Approval River.

- How people are reading and finding it—A selection of Google Analytics metrics around platform breakdown, internal versus external traffic, and top referrers. Similar to the comparison view, each metric includes a customizable comparison metric.

- Who tweeted it?—A comprehensive-as-possible list of accounts that have tweeted the article sorted by the account’s number of followers.v

In the interface, these three sections are prefaced by the text “Tell me about…” to make their use and functionality readily apparent to the user.

This Story’s Life

This visualization aims to combine the relevant information for better understanding why a story performed the way it did. We chart page views over time within the context of a newsroom’s internal promotion efforts. The orange blocks are time on home page, the light blue dots are when the main or staff Twitter accounts tweeted the story, and the darker blue dots are when the story appeared on the main Facebook page or pages. Similarly, any events added through the Approval River or created manually appear on the time series grouped by category. Events that exist across multiple categories are shown once in each relevant category row.
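The row layout described above, where an event in several categories appears once in each relevant row, can be sketched as a simple grouping step, together with a check for whether a moment falls within a home-page promotion window. The event shape and function names are hypothetical, chosen for this illustration only.

```python
from collections import defaultdict
from datetime import datetime


def category_rows(events):
    """Group impact events into one timeline row per category.

    Each event is a dict with a "timestamp" and a list of "categories"
    (a hypothetical shape). An event spanning several categories is
    placed once in each relevant row.
    """
    rows = defaultdict(list)
    for event in events:
        for category in set(event["categories"]):
            rows[category].append(event)
    for row in rows.values():
        row.sort(key=lambda e: e["timestamp"])
    return dict(rows)


def on_home_page(moment: datetime, intervals) -> bool:
    """True if `moment` falls inside any (start, end) home-page interval."""
    return any(start <= moment < end for start, end in intervals)
```

A charting layer could then draw each row under the page-view series and shade the spans where `on_home_page` is true, so traffic spikes can be read against promotion.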

Any impact events that have been assigned to this article appear below in a list filterable by impact tag, category, level, and date.

How People Are Reading and Finding It

We consider this one of the most useful pages in the platform. It displays the metrics in Google Analytics that newsrooms expressed most interest in or need to report:

- What device people were reading on

- Whether traffic came from external or internal sources

- Who referred traffic

The functionality and design mirror the bullet charts in the comparison view, allowing users to see these numbers in relationship to all articles or a specific group of articles. Hovering over the bullet chart’s marker will display the comparison value in a tooltip. The referrer information is particularly useful when IEs are asked to figure out the source of traffic for a popular story.
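To show what the internal-versus-external split and the referrer list involve, here is a hypothetical Python sketch that classifies one referrer URL per visit by domain and ranks the external sites. The input format is an assumption, not a Google Analytics export format, and the separate “direct” bucket for visits with no referrer is an addition made for this illustration.

```python
from collections import Counter
from urllib.parse import urlparse


def summarize_referrers(referrer_urls, own_domain):
    """Split visits into internal/external/direct and rank external sites.

    `referrer_urls` holds one referrer per visit; an empty string stands
    for a visit with no referrer (hypothetical input format).
    """
    internal = external = direct = 0
    sites = Counter()
    for ref in referrer_urls:
        host = urlparse(ref).netloc.lower()
        if not host:
            direct += 1
        elif host == own_domain or host.endswith("." + own_domain):
            internal += 1  # our own pages, including subdomains
        else:
            external += 1
            sites[host] += 1
    return {
        "internal": internal,
        "external": external,
        "direct": direct,
        "top_referrers": sites.most_common(3),
    }
```

Each count could then be placed on a bullet chart against the same value for all articles or a subject-tag group.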

Who Tweeted It?

This section lists everyone who tweeted a link to this article, as obtained by querying the Twitter Search API on a regular basis, sorted in descending order by the account’s number of followers. If, for example, no Twitter Search recipe had been set up in the Approval River, or someone previously not on the newsroom’s radar tweeted a link, IEs could look here and create a new event using the button at the top of the page.
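Because the search is repeated regularly, the same account can show up many times; a deduplicate-then-sort step like the Python sketch below would produce the ordered list. The tweet shape (`screen_name`, `followers`) is a simplified, hypothetical stand-in for Twitter Search API results, and `who_tweeted` is an invented name.

```python
def who_tweeted(tweets):
    """Return unique accounts that tweeted the link, most-followed first.

    `tweets` is a list of dicts with "screen_name" and "followers" keys
    (a simplified, hypothetical shape). Screen names are compared
    case-insensitively, and each account keeps its largest reported
    follower count.
    """
    accounts = {}
    for tweet in tweets:
        name = tweet["screen_name"].lower()
        accounts[name] = max(accounts.get(name, 0), tweet["followers"])
    return sorted(accounts.items(), key=lambda item: item[1], reverse=True)
```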

Users can also export all of this data using the button at the top of the page. Importantly, the back-end data-collection code is separate from the front-end interface. As a result, the data collection is completely agnostic about how it is displayed, allowing a newsroom to design its own custom views or visualizations of data. What we’ve produced here, directed by our research, is a first attempt at giving newsrooms the broad view of their stories and packages as they relate to one another, as well as easy-to-use, drill-down capabilities for when they need to explain the narrative behind how a particular story performed.

Newsroom Use

After four months of development, we launched NewsLynx in October 2014 with roughly six newsrooms. They ranged in size from half a dozen people to a microsite within a large metro daily. Most, but not all, already had impact workflows that they used to generate reports, either for grants or on an internal reporting schedule. The newsrooms with existing workflows also tended to be nonprofits. As with the newsrooms that responded to our survey, these organizations agreed to participate anonymously and to be identified only by their size and general characteristics.

In this section, we detail how the participating organizations used NewsLynx and what that can teach us about impact measurement best practices and future newsroom adoption.

Approval River Usage

Newsrooms mostly used the Approval River to track mentions of their work by influencers on social media or by other publications. Some newsrooms relied heavily on Twitter Lists. They set up recipes that watched for their domain name on lists such as “Presidents-HeadsOfGovt,”56 U.S. government officials,57 or the Justice Department’s list of U.S. attorneys.58 They would typically go through the Approval River once a week and categorize possible hits.

IEs also used Google Alerts recipes to look for pickups of their stories by other organizations or mentions of their founders or board of directors. We weren’t able to completely replace existing workflows, however. One challenge we faced was that some clipping services changed during the development of the platform, and we didn’t have the time to fully implement new service recipes. For example, according to some IEs, the quality of Google Alerts has decreased in recent months and their organizations now use other clipping services to track mentions. We discuss ways to address this problem of shifting technologies in the chapter Future Paths for NewsLynx.

In terms of efficiency, participating newsrooms reported that the Approval River helped streamline their clip-search tasks and, in the case of Twitter List searches, helped them surface items they wouldn’t otherwise have seen.

Article Section Usage

Participants reported that the most useful part of this section was “How people are reading and finding it” in the article detail view. They said it helped them explain to the newsroom “how well an article did,” especially in relation to a meaningful baseline. This page also provided links that helped IEs record which sites or accounts had picked up or were linking to the original article. Similarly, the full tweet list helped participants create events they would have missed without a recipe set up to catch them in the Approval River.

Understanding traffic sources was also a main takeaway from the Facebook and Twitter time-series charts. As one IE put it:

When there’s a spike in traffic for any story, it’s super handy to be able to quickly see a list of where traffic is coming from. For example, I noticed we had a story that was getting crazy traffic and NewsLynx said it was coming from Facebook. I could then find the origin post quickly.

This finding was encouraging, since specific activity on Facebook is particularly difficult to see. That our novel metric of Facebook shares over time can provide useful insight is an important takeaway.