Set aside for a moment concerns that the Russians are undermining our democracy, and let’s pause to admire their handiwork. Seen through a Silicon Valley lens, they are remarkable media innovators.

They’ve disrupted the tech sector so thoroughly that even Facebook and Google are scrambling. You can picture the approving nods they would get in West Coast boardrooms as they described their return on investment, new market opportunities, and plans for global expansion.

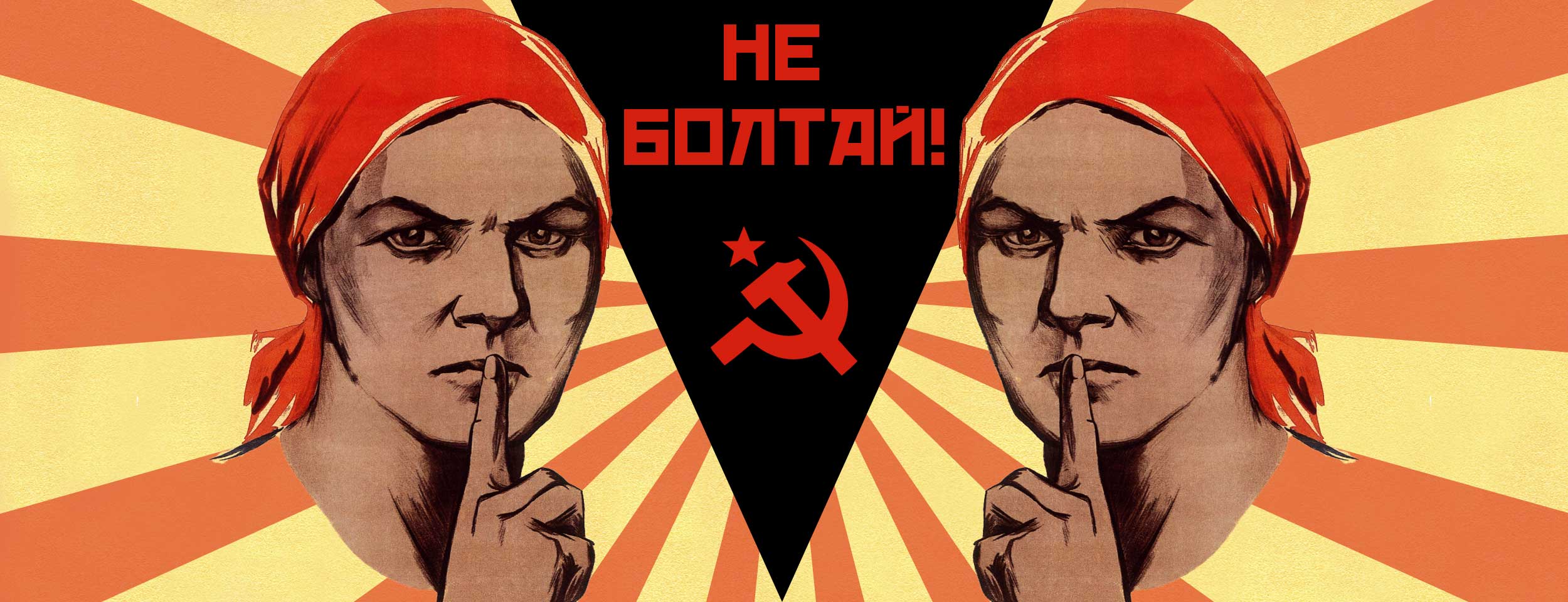

Their accomplishments are impressive. They created new forms of expression and tapped into audiences others overlooked. Perhaps the culture of the hackers they’ve bribed or coerced into working for them is rubbing off. Early on, the Russians understood that the information flows and consumption patterns of social media networks have a profound impact on human behavior and on how people form judgments and beliefs, and they began investigating how this might work for them.

They’ve been refining their current media models since 2008. As Facebook reached 100 million users and Barack Obama connected directly with voters via online social networks, the Russians began to update their disinformation strategies for the digital age. They do much of their beta testing in former Soviet republics that have become fragile new democracies. In this region one can most clearly see the arc of their development.

Russia began field tests during its 2008 military conflict with the former Soviet republic of Georgia. When Moscow invaded Ukraine in 2014, seizing and then annexing Crimea, it continued to work on an integrated approach combining legacy and new media, with a special focus on broadcast. In most former Soviet states, the majority of citizens get their news from television. Well-financed Russian-owned channels, both state and private, along with Russian-language channels with close ties to Moscow, help shape the public agenda.

Oleg Khomenok, a Ukrainian journalist and board member of the Global Investigative Journalism Network, describes how some of these new approaches unfolded during the Ukraine conflict. Toward the end of 2013, as demonstrators filled the streets to protest then-President Viktor Yanukovych’s turn toward Russia and away from Europe, Russian television channels introduced semantic changes. They called the protesters “radicals,” “extremists,” and “nationalists,” the last a term often used in Ukraine as a synonym for fascists. The Ukrainian government became a “junta.” Pro-Russian elements turned into “patriots.”

These changes are part of a more subtle and efficient strategy referred to as “shaping the narrative.” The Russians discovered it was enough to inject a drop of poison into the information bloodstream and let biology do its work. For example, says Khomenok, a news report might contain true facts alongside a false one. Other news reports might have true facts but false conclusions.

They’ve also pioneered new, computerized ways to pollute the information ecosystem. The Russians were among the first to deploy trolls and bots on a massive scale, creating Potemkin communities of fake users that support or oppose various positions.

They also iterate. This past fall the Russians applied lessons learned from these earlier experiments not only to the US election campaign but also to Moldova’s presidential election, customizing messages for different platforms and creating synergies between them. Many local Moldovan journalists thought the candidate who favored European integration, a policy with broad public support, would prevail. But during the campaign, fake news stories appeared claiming she wanted to allow tens of thousands of refugees into Moldova, something she had never said. Broadcast media joined in. “Experts” sympathetic to Moscow discussed the “migrant threat” on pro-Kremlin television channels.

Leaving nothing to chance, the Russians build redundancy into their media models. In case the migrant angle didn’t gain traction, websites also spread rumors that the pro-Europe candidate, a single woman, favored same-sex marriage. Church leaders entered the fray. The upshot? The pro-Russian candidate won.

Russian propagandists think outside the box. No idea is too outlandish. One imaginative approach involves television news reports featuring interviews and eyewitness accounts of fake events. A favorite theme is atrocities involving children. In 2014, after Russia annexed Crimea and as fighting spread through eastern Ukraine, one of Russia’s most-watched TV stations interviewed a Ukrainian woman who said she had seen the Ukrainian army crucify a small boy in the city of Slovyansk, then tie his mother to a tank and drag her through the streets.

Honestly, who can even think up this kind of stuff? Here’s a clue: viewers noticed similarities to a storyline from *Game of Thrones*.

It’s possible to debunk these stories, as two Russian journalists did with the crucifixion tale. But with this elegant model, Russia wins in almost every scenario. Even if people see the correction, Moscow has already moved on to something else. And the debunking keeps journalists busy. Facebook’s European media chief has compared debunking fake news to a game of “whack-a-mole.”

Occasionally, the Russians overreach. Lithuania, a prosperous Baltic state of 3 million, was the first Soviet republic to declare independence from Moscow. Though Lithuania is now a member of the European Union and NATO, Russia still meddles there. But a recent report by one of Russia’s largest television channels backfired. The report claimed that Lithuania had faked the dozens of deaths and injuries suffered during its fight for independence from Moscow. Lithuanian journalist Skirmantis Malinauskas published a dossier of documents, photos, and videos refuting the Russian claim. Lithuania blocked some of the channel’s broadcasts for several months, and a cable operator dropped the Russian channel from its lineup.

The Russians also follow Silicon Valley’s playbook when they face setbacks: They “fail fast” and pivot. In the case of Lithuania, they treated the episode as a low-cost way to try out new storylines, approaches, and platforms for use in larger markets in the future. Despite tensions, Russian entertainment channels remain popular in former Soviet republics.

Western democracies are struggling to respond to these strategies. A recent report by the Center for European Policy Analysis, a Washington think tank that studies Russian disinformation, stated candidly, “…we simply do not know what works.”

Last month, in addition to announcing sanctions against Russia, President Obama signed into law a defense authorization bill that included the bipartisan Countering Disinformation and Propaganda Act. It provides tens of millions of dollars to fund US government responses to Russian disinformation, as well as civil society efforts overseas.

Almost instantaneously, the well-oiled Russian machinery roared into action. A bevy of obscure websites began “shaping the narrative,” claiming Obama had signed a law making alt-right websites illegal, a charge Snopes.com debunked. Clever angle, don’t you think?

More effective responses will likely come from fighting fire with fire (more and better innovation, new collaborations, and creative approaches) than from the top-heavy bureaucratic measures now under discussion. The diverse skill sets and approaches of a vigorous civil society and free press, combined with structural reforms by the tech companies that control the platforms and the way information flows through them, may produce better results.

Ukraine offers an example. During the Russian invasion, faculty, students, alumni, and community volunteers at the Mohyla Journalism School in Kyiv collaborated to create the website StopFake.org. Supported by crowdfunding and donations, it has become a kind of university-based Snopes.com. Some of its supporters are even in Russia.

The team invites the public to send in rumors and suspicious news stories, which it fact-checks and publishes. It also supplies video to major Ukrainian television channels and online news sites. In just a few years, StopFake.org has grown dramatically. Its team now publishes in 10 languages, researches trends and patterns, maintains archives and databases, and trains journalists and politicians in other former Soviet countries. Several months ago it joined the First Draft Partner Network, alongside Facebook, Google, Twitter, and global news organizations such as CNN and the BBC.

The Russians have shown they can be agile. They seem to anticipate every move. Countering them could be an intriguing challenge for the best Silicon Valley has to offer.

Correction: An earlier version of this story misspelled the name Oleg Khomenok.

Louise Lief is Scholar in Residence at the American University School of Communication Investigative Reporting Workshop.