
Researchers who study AI and journalism are identifying a striking tension: people who use AI chatbots such as ChatGPT and summarization features such as Google’s AI Overview to find news seem to prefer them over clicking on individual stories, even though they know that chatbots are not always accurate.

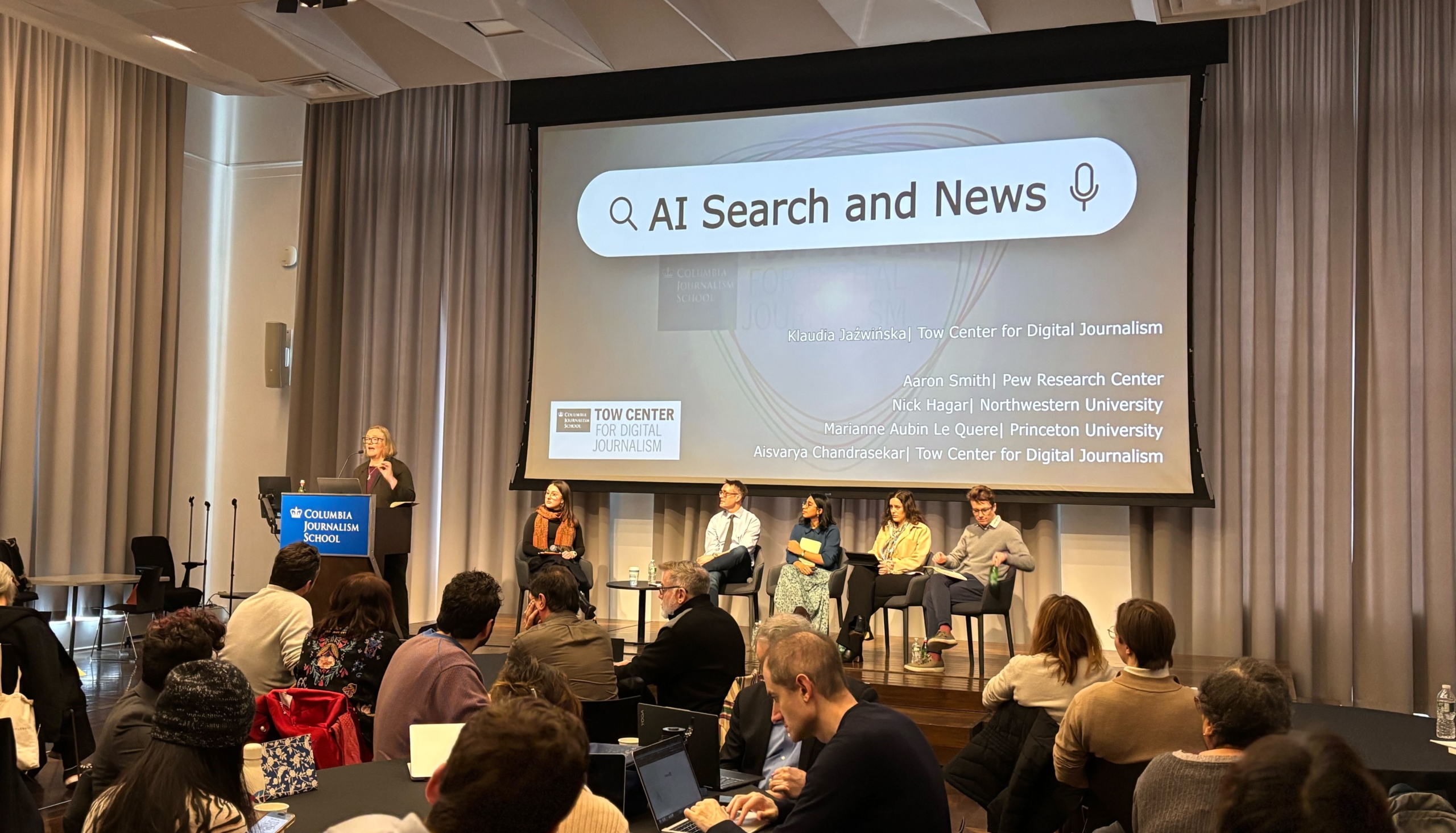

“They know the answers they are getting are not perfect,” said Nick Hagar, a postdoctoral researcher at Northwestern University’s Generative AI in the Newsroom (GAIN) initiative, as they previewed new research during a recent Tow Center panel on AI search and news. “But it’s just so convenient for them to be able to ask a quick query, have the AI search, and get a nice, condensed response, that the trade-off is worth it for a lot of these folks, for most of what they’re doing.”

Hagar said that all twenty participants interviewed preferred using AI over going directly to a news publisher to find information. Participants described AI platforms as more convenient, less biased, and better at delivering comprehensive information efficiently. These findings align with a recent interview study of fifty-three people from the Center for News, Technology, and Innovation (CNTI), in which participants said they liked how chatbots let them tailor information to their own focus.

Because both GAIN’s and CNTI’s studies interviewed only small samples, their findings cannot be generalized to all news consumers. Still, it is notable that these independently conducted studies reached such similar conclusions. Both studies also showed that people prefer AI tools for news because they offer a more streamlined experience than publisher websites cluttered with ads, paywalls, or other noise. People also like to feel in control of how they receive information: they can ask follow-up questions, challenge ideas, and request context that news articles may not always provide.

Yet this sense of ease and agency can be misleading. Last year, the European Broadcasting Union published a tool kit outlining common ways AI outputs can misrepresent news reporting, including by mixing up or inventing details, omitting important context, conflating opinion with fact, and relying on incomplete or inappropriate sources. AI systems also struggle to distinguish between source types. In past research, Hagar found that models misinterpreted information in ways that journalists would not. Unlike a trained journalist, an AI model is “not able to really differentiate between the level of expertise, trustworthiness, credibility in those sources,” they said. “It flattens everything into just one equal plane of source material.”

Even when people are cautious about chatbots’ accuracy, they may be swayed by signals suggesting that the bots can be trusted, such as confident-sounding language. Research from the Tow Center, for example, has found that AI systems often fail to appropriately hedge or qualify their answers: responses may sound certain when incorrect or tentative when accurate. Hagar and colleagues observed much the same.

Users’ trust in AI outputs may also be shaped by citations to news organizations that audiences already trust. Participants in the GAIN study reported scanning citations for outlets such as the New York Times or CNN as shortcuts to credibility, often without clicking on the links. “We had participants explicitly say, ‘It’s a transitive property. I can trust CNN, so therefore I can trust what this AI is telling me,’” Hagar said. But citations aren’t always markers of trustworthy content. In an experiment run by the Tow Center, we observed that chatbots misattributed quotes, fabricated links, and sometimes pointed to syndicated or copied versions of articles rather than the originals.

More broadly, the usefulness of AI outputs depends on the underlying information environment. Research previewed during the panel by Marianne Aubin Le Quéré found that queries about news deserts, such as requests for crime statistics in a given county, returned shorter, less specific Google AI Overview summaries that leaned heavily on large databases and aggregators. The data was not necessarily inaccurate, but the thinner summaries reflected gaps in local reporting.

At the same time, the pool of articles accessible to AI tools is shrinking, as many publishers have moved to block AI crawlers over concerns about unauthorized use or misrepresentation. Readers may not realize that the information they are receiving is incomplete.

The good news is that people are not relying on AI indiscriminately. Hagar pointed out that participants in their study described using chatbots to get quickly oriented on a topic, while continuing to rely on authoritative outlets for deeper research or high-stakes stories. Other research suggests that convenience has not replaced the need for journalism produced by humans: according to the CNTI study, interviewees view AI chatbots as supplements to reporting rather than substitutes for it. Last fall, a report from the Reuters Institute also identified a “comfort gap” between AI- and human-produced news: on average, only 12 percent of respondents felt comfortable with “fully AI-generated” journalism.

At our event, Aubin Le Quéré said that chatbot-mediated news consumption could ultimately benefit audiences—particularly younger people who report feeling overwhelmed by the volume and negativity of the news—if designed responsibly. For now, the technology is far from ready to serve as a primary gateway for news discovery. But there’s a lesson in all of this for news publishers—if people can access news in a simple, easy-to-understand way, they’ll do it.
