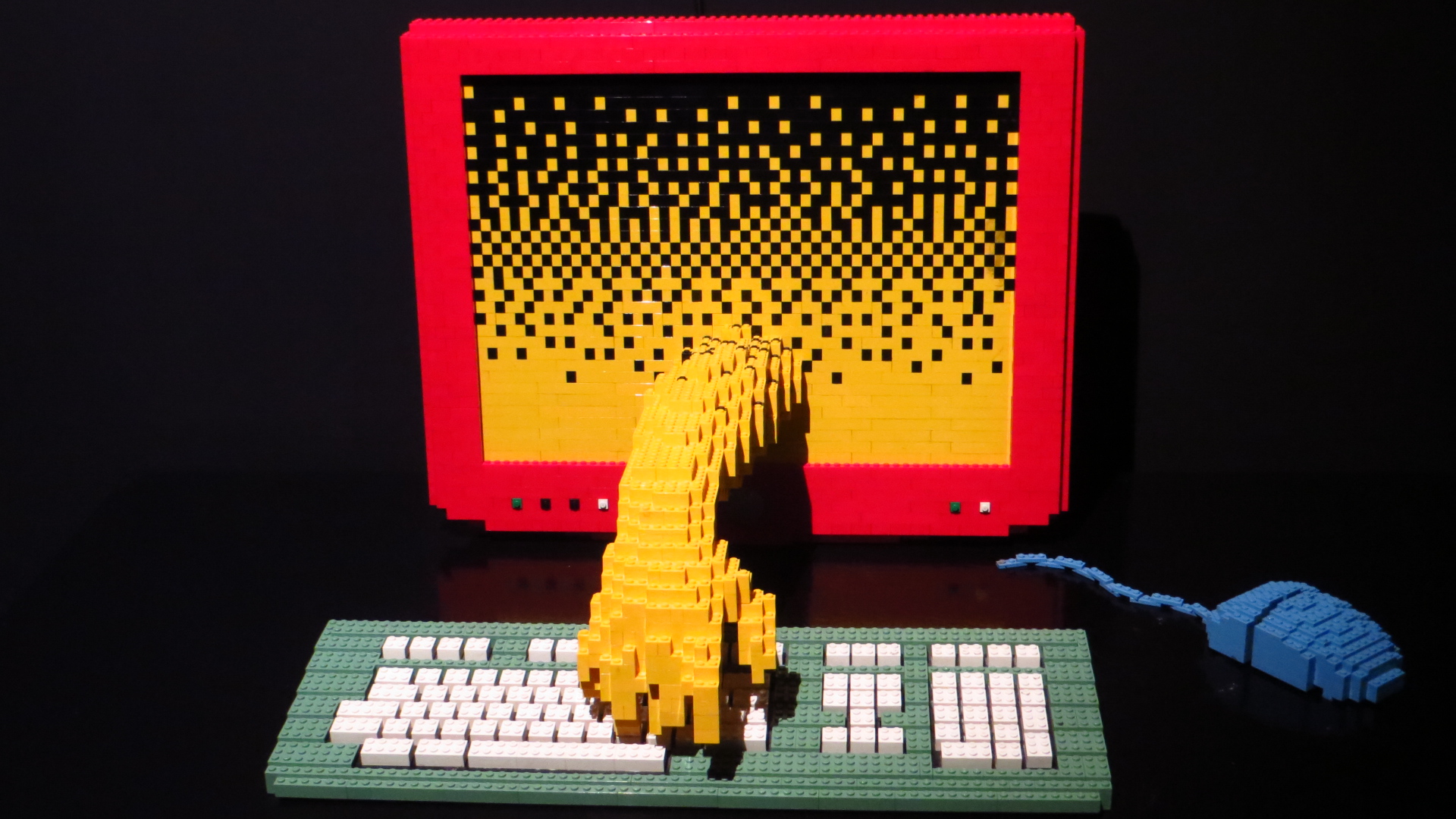

Last month Google CEO Sundar Pichai took the stage at the company’s yearly developer festival for a whiz-bang demo of its latest technology, Google Duplex. Audience members were awed as Duplex’s artificial intelligence (AI) carried on seemingly natural conversations with real people, successfully booking a restaurant reservation and a salon appointment. (Listen to an example here.) After the initial exuberance, critics pointed out various ethical issues the technology raises, like undermining informed consent to record a call and opening the door to further weaponization of robocalls.

But assuming these ethical concerns can be surmounted, I can’t help but wonder: What might this technology offer for reporting, and where is it still too limited? Will AI, for instance, be able to make calls on behalf of journalists? What kinds of new issues might it expose for the practice of journalism?

AI technology, including Duplex, can only converse naturally about a set of narrowly constrained domains where training data is plentiful. Google’s conversational AI was initially engineered to have conversations about just three things: making restaurant reservations, scheduling hair salon appointments, and getting holiday hours. There are only so many ways to respond when asked about business hours, or to book a table at a restaurant. These are targeted tasks where the conversation is more or less scripted.

So what types of calls do reporters make that might fit such constraints?

The answer: More than might be obvious at first. I posed this question to students in my Algorithmic News Media course at Northwestern University this spring, and we managed to brainstorm quite a few possibilities. There are a variety of unexpected but routine events that get reported on every day in the news, such as fires, sports, and crimes, where basic structured information about the event could be collected by such a system. In crisis events like floods or storms, automated calls could be used to sample a geographic area and ask about damage or food and water availability.

AI could also be used for fact-checking; the system could call sources to confirm quotes or facts. Or it could be used in other reporting scenarios: Automated interviews would allow for more comprehensive data collection, such as canvassing a neighborhood to find individuals affected by a local policy change, routinely updating events calendar databases, or staying on top of local commercial real-estate developments, to name just a few.

Duplex-like interviewing technology could also enhance existing automated news writing systems. The Associated Press, for instance, publishes thousands of automatically written earnings release articles every fiscal quarter. Sometimes those automated reports just function as stubs: they’re a starting point. AP generates these as a way to get something out on the wire quickly and cheaply, with reporters sometimes circling back to write through the article. That might include getting a quote from a corporate executive like a CEO to enrich the story with context and perspective, or digging out an unexpected figure from an obscure but important division. But just soliciting a comment on something like an earnings release could likely be delegated to an automatic interviewer.

All automated news writing relies heavily on data. To the extent that Duplex-like technology can lower the cost of collecting data, we can expect it to enable more automated, structured, and data-driven journalism. United Robots, for example, gathers the data and provides the automated writing software that powers a site called Klackspark covering all divisions of local men’s and women’s soccer in Sweden. Instead of having part-time workers call the stadiums after each match to ask about outcomes and scores, Duplex technology could gather that data automatically and efficiently. While some low-skill jobs might go away, higher-skill jobs for contextualizing the data and editing the automated systems and outputs would emerge.

To step back a bit, the technology is potentially useful in journalism wherever simple information solicitations are required (and where there is an obligation to answer, for instance, from public officials) or where the incentives of an interviewee are aligned with honestly answering a question or providing data. While this represents a narrow slice of the conversations that reporters have on a day-to-day basis, there are still some opportunities there. News organizations that see a future in data-driven, structured, and automated content should invest in adapting Duplex-like technology to better suit journalism.

Of course, as any reporter will tell you, interviews often require more than blindly recording responses. They demand adversarial pushback on falsehoods, follow-ups to clarify facts, and re-framing of unanswered questions to press for responses. If information is sensitive, complex, uncertain, or apt to be misunderstood, it’s best to have a human reporter on the job, not to mention that merely gaining access to a source may rely on the development of a trusting rapport. An automated interview call will have none of that.

Nor will auto-interviewers have the authority that comes from the countenance of a real person, with a real reputation, who confronts a source with a difficult question. Moreover, the technology’s effectiveness could easily be diluted. If 10 (or 100) different news organizations sic their automated interview bots on a source, will that source feel obligated to respond or will they just hang up? And will news media then create a digital version of a media crush that can traumatize communities like the church shooting victims in Sutherland Springs, Texas?

It’s unclear how interviewees will react to automated interview technology in different scenarios. Everything from the gender and tone of the synthesized voice to the personality and name used could be consequential to how people respond. Should a news organization consider a diversity of automated interview voices, names, and genders, or choose the one most likely to optimize for response rate?

As the unfortunate early demise of Microsoft’s Tay bot showed, when trolls turned it into a racist misanthrope in less than a day, Duplex-like automated interview technology also needs to grapple with the fact that people often clown around with bots. Humans like to test the limits of the technology, to stump it, break it, and get it to do things it wasn’t designed to do. Such technology needs to be bulletproofed for journalistic scenarios.

It’s important to try to see through the hype of AI technologies that companies like Google are incessantly peddling. Duplex is still quite limited. But I’m optimistic that there are productive and useful journalism scenarios that would benefit from the technology. Plenty of research and prototyping awaits to test its limits empirically and see where it succeeds.